Articles

Engineering judgment, multiplied by AI

Featured + latest

Top picks—then the rest of the library.

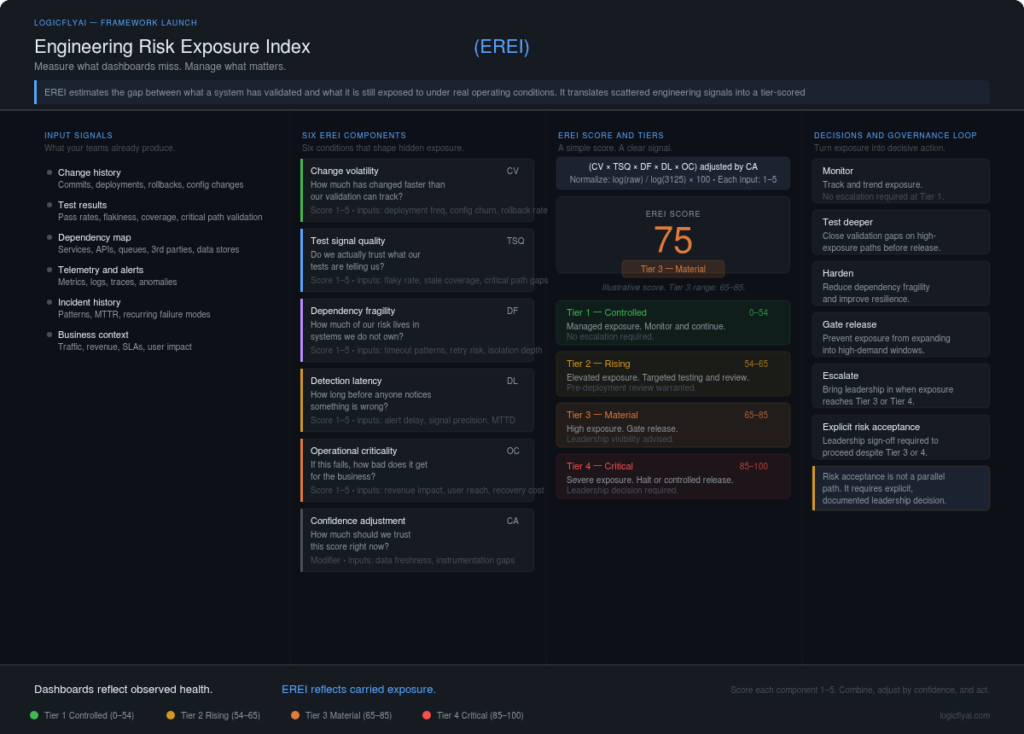

The Engineering Risk Exposure Index (EREI): Measuring What Dashboards Miss

A large software platform enters a high-value demand window. Traffic climbs fast. Checkout volume rises. Shared services run hotter than usual. Test suites passed earlier in the day. Deployment telemetry looked normal. Error rates are still within tolerance. Then, under sustained load, three services degrade in sequence. Not because one obvious defect slipped through. Not […]

Read Article →

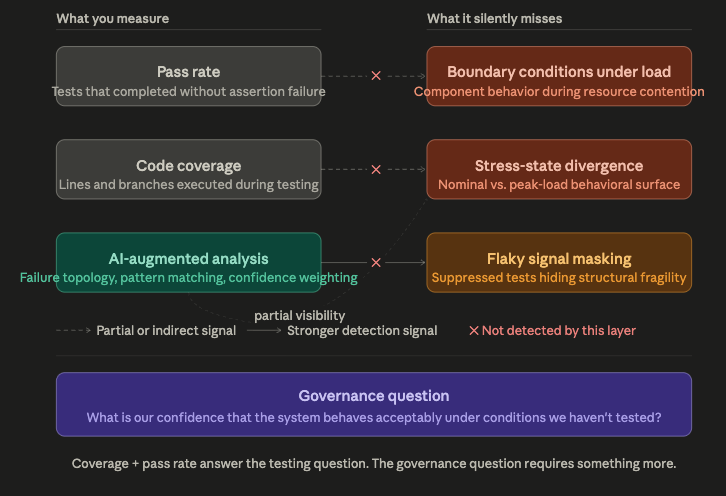

Green Dashboards, Hidden Exposure: Why Coverage Metrics Mislead

The dashboards looked clean. Ninety-four percent test pass rate. Eighty-one percent code coverage. Build pipelines green across every environment. The engineering org had spent months hardening its test suite, and the numbers reflected that effort. Leadership reviewed the metrics in quarterly planning and felt good about the trajectory. Then traffic spiked—a scheduled promotion, a regional […]

Read Article →

QA as Risk Intelligence: Reframing Quality Engineering’s Strategic Role

The system shipped clean. Every test suite passed. Code review was thorough, deployment ran without incident, and the monitoring dashboards stayed green through the first 48 hours post-release. Two weeks later, under a load spike that engineering had seen before—not an edge case, not an anomaly—three interconnected services began degrading in sequence. Response latency climbed. […]

Read Article →

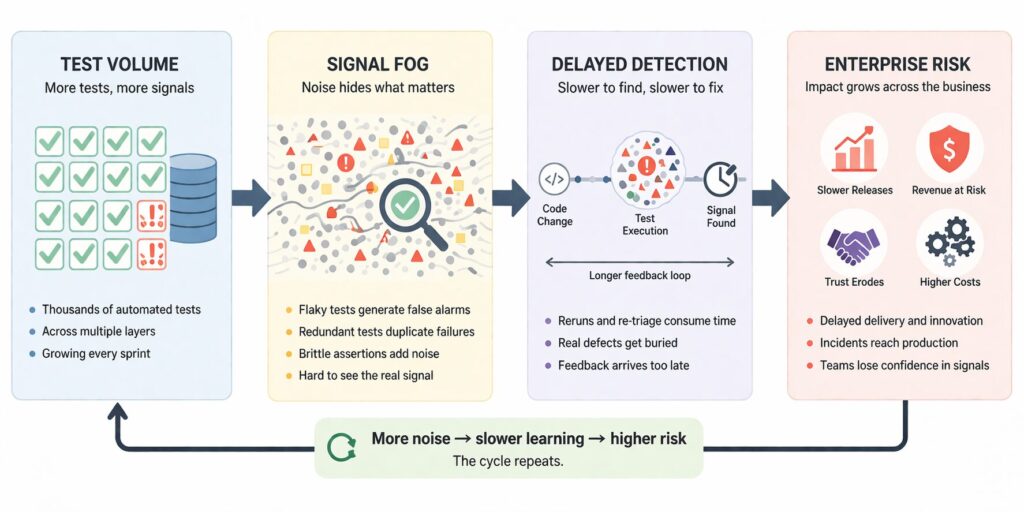

When More Tests Create Signal Fog

A release train looks healthy on paper. The pipeline is full. Thousands of checks run on every merge. Coverage numbers look respectable. Automation is expanding. Yet engineers are stuck rerunning jobs, scanning familiar failures, and arguing over whether a red build means product risk or test instability. The dashboard says the system is controlled. The […]

Read Article →

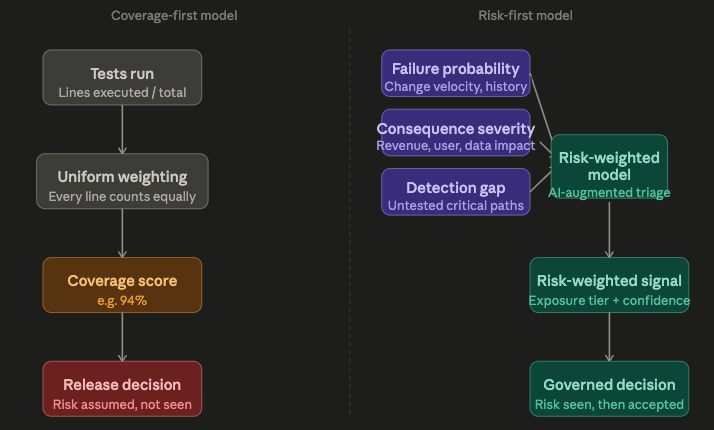

Test Automation Isn’t Broken—Your Risk Model Is

The dashboards looked fine. A SaaS-like platform. Thousands of tests. A CI pipeline that had been green for weeks. A coverage report sitting comfortably above ninety percent. The engineering team had invested seriously in automation—multiple test suites, staged environments, disciplined release gates. Then a high-traffic event hit. A checkout path that processed the heaviest transaction […]

Read Article →

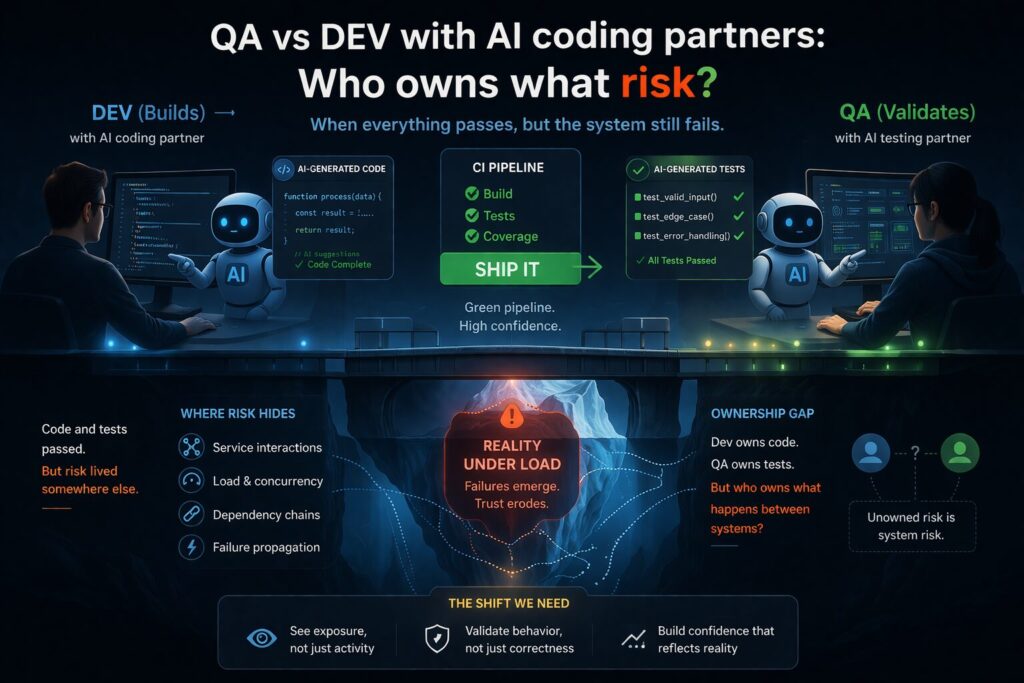

When validation agrees—but reality disagrees

A feature is delivered faster than expected. An AI coding partner helped generate parts of the implementation. It also suggested unit tests. The pull request moved quickly—reviewed, approved, merged. CI pipelines were clean. Days later, under peak usage, the system begins to struggle. Latency increases. Retries accumulate. Downstream services degrade. Eventually, parts of the system […]

Read Article →

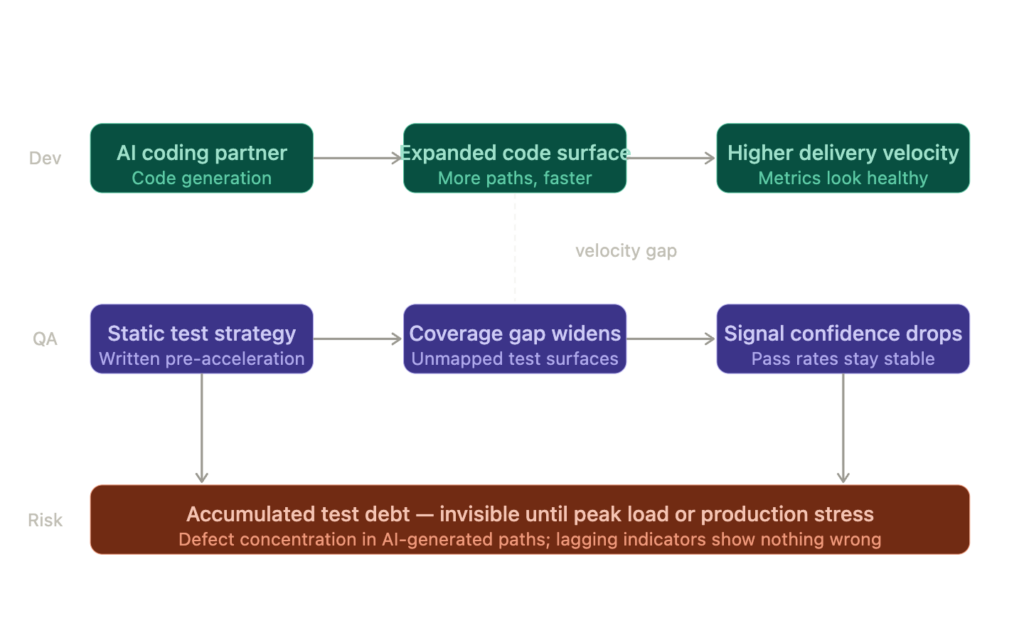

The Developer-QA AI Divide: When Coding Partners Outpace Test Strategy

The dashboards told a reassuring story. Velocity was up. Sprint completion rates had climbed steadily over the previous quarter. Defect escape rate — the team’s go-to health signal — remained flat. The engineering organization was producing more, faster, and the numbers confirmed it. Then a release hit peak traffic and broke in a way no […]

Read Article →

Dashboards were green. Customers still lost trust.

A peak window hits. The service status page is quiet. Uptime looks normal. Average latency is within the target band. Error rates are “acceptable.” Yet customers can’t complete a critical flow. Some get timeouts. Others see partial success. A few try again and make it through — which makes the problem harder to describe and […]

Read Article →

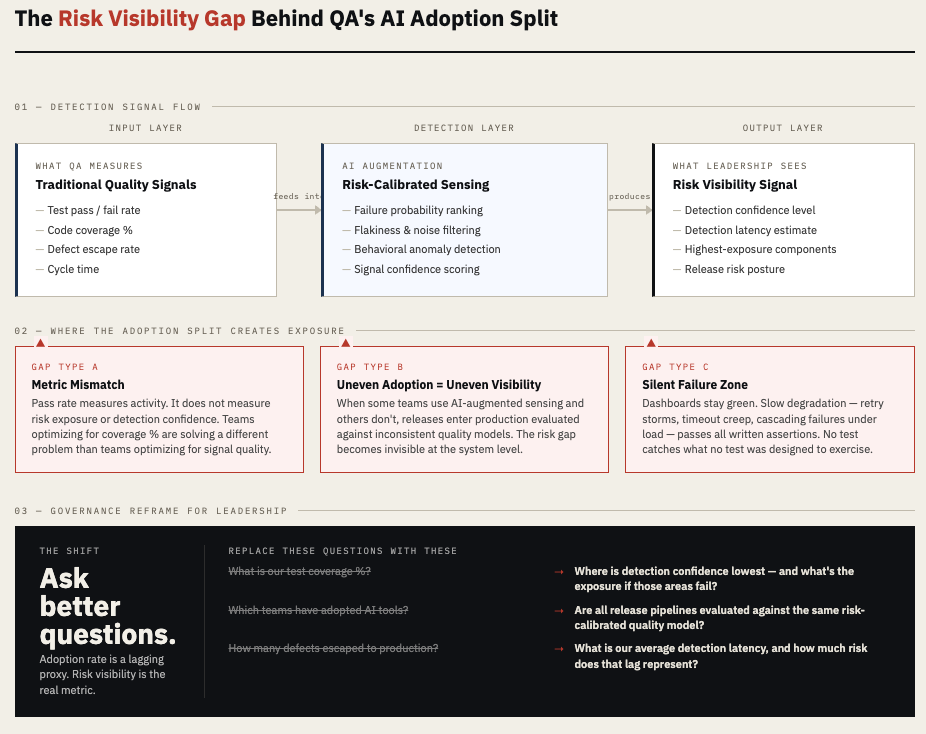

Why QA Teams Are Split on AI Adoption (And What That Reveals)

The dashboards showed 94% test pass rates. Coverage hovered above threshold. Velocity was stable. Then a large SaaS platform’s checkout flow degraded under peak traffic. Not a crash — a slow, compounding failure. Timeouts crept up. Retry storms followed. Revenue leaked quietly for four hours before anyone connected the dots. The QA team had run […]

Read Article →

Hello, world!

I’m starting this site as a learning diary I can keep consistently—part research notebook, part field notes. I learn best by writing: capturing what I’m studying, what I’m developing, what I’m testing, what surprised me, and how my thinking changes over time. This will be a place for personal exploration shared in public: • small […]

Read Article →