The dashboards looked fine.

A SaaS-like platform. Thousands of tests. A CI pipeline that had been green for weeks. A coverage report sitting comfortably above ninety percent. The engineering team had invested seriously in automation—multiple test suites, staged environments, disciplined release gates.

Then a high-traffic event hit. A checkout path that processed the heaviest transaction volume of the year took a route through the system that had never been exercised under load. It was covered—code execution data confirmed it. But the test that touched that path was a shallow unit check that ran against mocked dependencies. The real failure mode—a race condition between two upstream services under concurrent write pressure—existed nowhere in the suite.

The dashboards stayed green until production started failing.

No one had made a bad decision. They had made a reasonable decision with a broken measuring instrument.

The Coverage Religion

Somewhere in the past two decades, the software industry made a quiet but consequential mistake. It adopted code coverage percentage as a primary quality signal—and then treated it like a risk signal.

These are not the same thing.

Coverage percentage answers one question: what share of your code was executed at least once during testing? That is a useful operational fact. It is not, however, an answer to the questions that actually matter before a release: where is failure most likely? How severe is the consequence if it happens? And how long would it take to detect?

The coverage number became a target. Teams pursued it. Leaders tracked it. Release gates were built around it. And because it was measurable and moved in the right direction with effort, it felt like progress.

The problem is that coverage is a measure of completeness, not a measure of safety. You can reach ninety-five percent coverage on a codebase and still have your highest-risk execution paths untested, your highest-consequence components covered only by tests that cannot detect the failures that matter, and a detection latency equal to the gap between merge and incident report.

What Coverage Actually Measures

Coverage tells you three things. It does not tell you the three things you need.

It tells you execution breadth: which lines ran. It does not tell you failure probability: which components are most likely to break given your current change pattern, dependency topology, and operational history.

It tells you test presence: a test exists that touches this code. It does not tell you test adequacy: whether that test can detect the failure mode you actually face.

It tells you current state: how much was covered in the last run. It does not tell you risk trajectory: whether your unexecuted paths are growing riskier as the system evolves around them.

A high-coverage codebase with poor test design on its most critical paths is not a low-risk system. It is a high-risk system with good-looking metrics.
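The gap between test presence and test adequacy is easy to reproduce in miniature. The sketch below is purely illustrative: `apply_discount`, `shallow_test`, and `adequate_test` are hypothetical names, and the bug is planted on purpose. Both tests execute every line of the function, so a line-coverage tool would report the same number for either one.

```python
def apply_discount(total_cents: int, percent: int) -> int:
    """Return the total after a percentage discount.

    Deliberate bug for illustration: `percent // 100` is 0 for any
    percent below 100, so no discount is ever applied.
    """
    return total_cents - (percent // 100) * total_cents


def shallow_test() -> bool:
    # Executes every line of apply_discount, so line coverage is complete,
    # but it only checks the return type, not the value.
    result = apply_discount(10_000, 15)
    return isinstance(result, int)


def adequate_test() -> bool:
    # Same execution path, but it asserts the value that actually matters.
    return apply_discount(10_000, 15) == 8_500


if __name__ == "__main__":
    print("shallow test passes:", shallow_test())
    print("adequate test passes:", adequate_test())
```

The shallow test passes and the adequate test fails, yet the coverage report cannot tell them apart.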

This distinction matters because engineering decisions—resource allocation, release timing, tech debt sequencing—are made against quality signals. If the signal is misleading, the decisions carry hidden exposure.

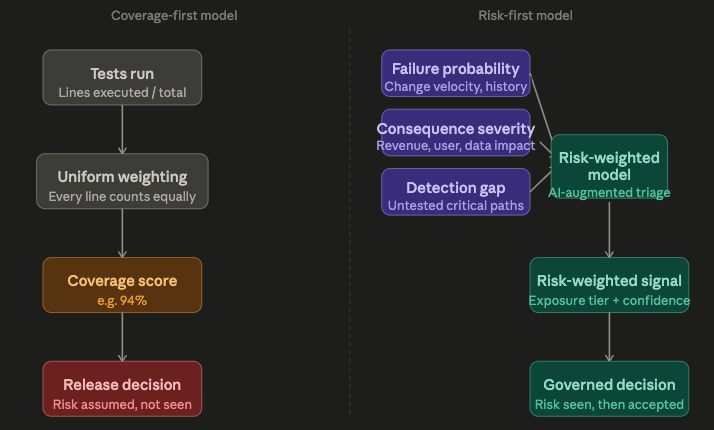

The Mental Model Shift: Risk First, Coverage Second

The correction is not to abandon coverage measurement. It is to stop treating coverage as the primary ordering principle for test investment.

A risk-first model starts with a different question. Not “what percentage of the code do we test?” but “where in this system does a failure carry the highest combination of probability, consequence, and detection gap?”

Those three factors, taken together, describe your actual exposure:

- Failure probability is shaped by change velocity (how often this area is touched), historical defect density, dependency complexity, and the number of concurrent execution paths.

- Consequence severity is shaped by what this component does—payment processing, authentication, data integrity operations, user-facing critical flows. Not all code is equal. A failure in a billing calculation path carries different stakes than a failure in a tooltip animation.

- Detection gap is the interval between when a failure occurs and when your testing infrastructure would find it—if it would find it at all. A component that is covered but only by tests that cannot exercise it under realistic load or concurrent state has a large detection gap regardless of its coverage percentage.

Risk = probability × consequence × detection gap.

That expression does not replace test design or engineering judgment. But it gives you a priority ordering that coverage alone cannot provide.
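As a rough illustration of that ordering, here is a minimal sketch of the product used as a ranking key. Everything in it is an assumption made for the example: the component names, the scales (probability from 0 to 1, consequence and detection gap from 1 to 5), and every number. The point is only that sorting by risk and sorting by coverage can produce opposite priorities.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    probability: float    # assumed scale: 0.0 to 1.0
    consequence: float    # assumed scale: 1 (cosmetic) to 5 (critical)
    detection_gap: float  # assumed scale: 1 (caught quickly) to 5 (not caught)
    line_coverage: float  # 0.0 to 1.0, kept only for comparison

    @property
    def risk(self) -> float:
        # Risk = probability x consequence x detection gap
        return self.probability * self.consequence * self.detection_gap


# Made-up values, for illustration only.
COMPONENTS = [
    Component("checkout", 0.30, 5, 4, 0.95),
    Component("tooltip_animation", 0.40, 1, 1, 0.60),
    Component("report_export", 0.10, 3, 2, 0.85),
]


def rank_by_risk(components):
    """Highest risk first."""
    return sorted(components, key=lambda c: c.risk, reverse=True)


def rank_by_coverage_gap(components):
    """Lowest coverage first: the order a coverage target pushes you toward."""
    return sorted(components, key=lambda c: c.line_coverage)


ranked = rank_by_risk(COMPONENTS)
coverage_first = rank_by_coverage_gap(COMPONENTS)

if __name__ == "__main__":
    for c in ranked:
        print(f"{c.name}: risk={c.risk:.2f}, coverage={c.line_coverage:.0%}")
```

With these made-up inputs, the checkout component ranks first by risk and last by coverage gap. A team chasing the coverage number would work on the tooltip first.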

Where AI Fits—and What It Is Not Doing

AI does not fix test automation by writing more tests. That framing produces higher coverage numbers, not lower risk exposure.

The more accurate description of what AI-augmented testing can do is this: it acts as a risk-signal classifier. It helps you identify where your existing suite is pointing at the wrong things, where your detection gaps are growing, and which untested or under-tested paths are accumulating risk faster than others.

Specifically:

Change-velocity weighting. Commit history, code churn, and dependency graphs can be analyzed to identify which components are changing frequently without corresponding increases in test coverage or quality. A module with high churn and stale tests is a risk indicator, not just a maintenance note.
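A simple version of this signal needs no model at all. The sketch below is a plain heuristic standing in for the analysis, not an AI technique: it flags modules whose source changes often while their tests rarely change. The commit counts are assumed to come from your version control history over some fixed window; the names, thresholds, and sample numbers are all hypothetical.

```python
from typing import NamedTuple


class ModuleActivity(NamedTuple):
    module: str
    source_commits: int  # commits touching the module's source in the window
    test_commits: int    # commits touching the module's tests in the same window


def stale_test_hotspots(activity, min_source_commits=10, max_test_ratio=0.2):
    """Return (module, test-to-source commit ratio) for high-churn modules
    whose tests are changing much less often than their source.

    Both thresholds are arbitrary starting points, not recommendations.
    """
    flagged = []
    for entry in activity:
        if entry.source_commits < min_source_commits:
            continue  # low churn: not interesting for this signal
        ratio = entry.test_commits / entry.source_commits
        if ratio <= max_test_ratio:
            flagged.append((entry.module, round(ratio, 2)))
    # Lowest ratio first: the stalest tests relative to churn.
    return sorted(flagged, key=lambda item: item[1])


# Made-up sample data, for illustration only.
SAMPLE = [
    ModuleActivity("payments", 42, 3),
    ModuleActivity("search", 25, 20),
    ModuleActivity("inventory", 15, 2),
    ModuleActivity("docs_site", 2, 0),
]

hotspots = stale_test_hotspots(SAMPLE)

if __name__ == "__main__":
    for module, ratio in hotspots:
        print(f"{module}: test/source commit ratio {ratio}")
```

A ratio like this is crude, since it says nothing about what the tests assert, but it is a cheap way to find where to look first.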

Failure-signal classification. Not all test failures carry equal information. AI classification can distinguish between failures that represent real defect signals and failures that represent test environment noise, brittle assertions, or flaky infrastructure. Reducing noise in your failure signal improves confidence in what the suite is actually telling you.

Execution path risk scoring. Code paths vary in how often they are exercised, how many dependencies they cross, and how severe their failure consequences are. AI can surface paths where the combination of those factors is high but the current test suite offers little meaningful detection. That is not automatic coverage. It is visibility into where coverage strategy needs to shift.

Confidence calibration. A test suite that gives you ninety-four percent confidence in a low-risk area and fifty percent confidence in a high-risk one is not a high-confidence system. AI augmentation can reweight your confidence score by the risk distribution, not just the coverage distribution.
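The arithmetic behind that reweighting is small enough to show directly. In the sketch below, the two confidence figures come from the paragraph above; the code-share and risk-share weights are invented for the example, as are all the names.

```python
def weighted_confidence(areas, weight_key):
    """Average the per-area confidence, weighted by the chosen share."""
    total_weight = sum(area[weight_key] for area in areas)
    return sum(area["confidence"] * area[weight_key] for area in areas) / total_weight


# Confidence values from the text; the shares are assumptions for illustration.
AREAS = [
    {"name": "low_risk_area", "confidence": 0.94, "code_share": 0.8, "risk_share": 0.2},
    {"name": "high_risk_area", "confidence": 0.50, "code_share": 0.2, "risk_share": 0.8},
]

by_code = weighted_confidence(AREAS, "code_share")
by_risk = weighted_confidence(AREAS, "risk_share")

if __name__ == "__main__":
    print(f"weighted by code share: {by_code:.3f}")
    print(f"weighted by risk share: {by_risk:.3f}")
```

Under these assumed weights, the same suite scores about 0.85 when weighted by where the code is and about 0.59 when weighted by where the risk is. Nothing about the tests changed, only the question being asked of them.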

None of this changes what engineers and architects do. It changes what they can see.

The Enterprise Translation

Leaders who sign off on releases against coverage-based quality gates are making risk decisions. The question is whether they are making those decisions with information about the risk—or with information about the measurement.

There is a structural reason this matters beyond individual release calls. When an engineering team under delivery pressure has to demonstrate quality, it optimizes toward the metric that defines quality. If that metric is coverage percentage, the rational response is to write tests that increase coverage. Those tests may or may not address the system’s actual risk surface. Under time constraints, they often do not.

This is not a failure of discipline. It is a predictable response to a poorly constructed incentive.

The downstream effect is that technical risk accumulates in the gaps between what coverage measures and what detecting real failures requires. That accumulation is invisible on standard dashboards. It surfaces when conditions change (traffic spikes, infrastructure upgrades, third-party dependency updates, novel transaction patterns) and the untested execution paths finally encounter the state they were never tested against.

By then, the question is not whether to invest in better test strategy. The question is how much the incident cost.

Governing Risk Exposure, Not Coverage Numbers

The governance shift this requires is not complex, but it does require a change in what leaders ask for.

Instead of “what is our coverage percentage?” the more useful question is: “what share of our identified high-risk surface is under active, adequate detection?”

That question produces different conversations. It requires engineering teams to map risk—not just coverage. It creates accountability for detection gap, not just test existence. And it makes the tradeoff visible: you can have high coverage and low risk-surface detection, or you can invest in the coverage that actually corresponds to consequence.
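One way to make that question concrete is to compute both numbers side by side from the same inventory. The sketch below assumes the team has already tagged each execution path by hand as high-risk or not, and as adequately detected or not; that tagging is the hard part and is not automated here. All names and values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExecutionPath:
    name: str
    high_risk: bool
    covered: bool             # executed by at least one test
    adequate_detection: bool  # a test could catch the failure mode that matters


def coverage_share(paths):
    """Share of all paths executed by at least one test."""
    return sum(p.covered for p in paths) / len(paths)


def high_risk_detection_share(paths):
    """Share of high-risk paths under adequate detection, or None if none are tagged."""
    high_risk = [p for p in paths if p.high_risk]
    if not high_risk:
        return None
    return sum(p.adequate_detection for p in high_risk) / len(high_risk)


# Made-up inventory, for illustration only.
PATHS = [
    ExecutionPath("checkout", high_risk=True, covered=True, adequate_detection=False),
    ExecutionPath("authentication", high_risk=True, covered=True, adequate_detection=True),
    ExecutionPath("billing_calculation", high_risk=True, covered=True, adequate_detection=False),
    ExecutionPath("tooltip_animation", high_risk=False, covered=True, adequate_detection=True),
    ExecutionPath("settings_page", high_risk=False, covered=False, adequate_detection=False),
]

overall = coverage_share(PATHS)
risk_surface = high_risk_detection_share(PATHS)

if __name__ == "__main__":
    print(f"paths covered: {overall:.0%}")
    print(f"high-risk paths under adequate detection: {risk_surface:.0%}")
```

In this invented inventory, 80 percent of paths are covered while only one of the three high-risk paths is under adequate detection. Those are the two numbers the governance question asks leaders to see together.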

Three questions worth embedding in release governance:

- Where are our highest-risk execution paths, and what is the quality of test coverage specifically there?

- What is our detection latency on failure in those paths—how long between production failure and engineering awareness?

- What is our confidence score on the signals our suite is currently generating—are we distinguishing real defects from test noise?

None of this requires new tooling immediately. It requires a shift in what you track and what questions you ask of the data you already have.

Coverage expansion is not the goal. Risk surface reduction is.

The Takeaway

High coverage tells you how much of your code is touched by tests. It does not tell you how much of your risk is actually managed.

The two numbers are related, but they are not the same—and treating them as equivalent is how organizations end up with green dashboards and production incidents in the same release cycle.

The shift is not from “test more” to “test less.” It is from “test evenly” to “test according to where exposure actually lives.” AI augmentation supports that shift by improving the visibility of risk signals that coverage metrics structurally miss.

The organizations that govern this well are not the ones with the highest coverage. They are the ones with the clearest picture of where their risk surface is, how much of it is under active detection, and what it would cost if it were not.
