The dashboards told a reassuring story.

Velocity was up. Sprint completion rates had climbed steadily over the previous quarter. Defect escape rate — the team’s go-to health signal — remained flat. The engineering organization was producing more, faster, and the numbers confirmed it.

Then a release hit peak traffic and broke in a way no one had anticipated. Not because of a known risk. Because of an unknown one. An AI-assisted code path — auto-generated, quickly reviewed, merged under pressure — had never been meaningfully tested. Not through neglect. Through assumption. Everyone believed someone else had mapped that surface.

No one had.

The velocity gap no one names

When developers began adopting AI coding partners, the conversation focused on productivity: faster code generation, less boilerplate, faster context switching. The gains were real. AI-assisted developers produce more code, more quickly, with fewer low-level syntax errors.

What followed was quieter and less discussed: QA teams didn’t adopt AI at the same rate. A 2023 survey by Gartner noted uneven adoption across software development functions, with test and quality functions lagging development in AI tooling maturity. The reasons are understandable — test strategy involves nuanced judgment, domain context, and risk prioritization that early AI tools weren’t built to support well.

The result is structural: a delivery velocity gap has opened between what development produces and what QA can meaningfully verify.

This isn’t a culture problem. It’s an engineering risk architecture problem.

Why your current metrics won’t catch it

Most engineering teams track defect escape rate, test pass rate, and code coverage percentage. These are not useless. They are, however, trailing indicators — they report on what already happened, not on the risk accumulating in what hasn’t been tested yet.

When AI tools accelerate code generation, three things happen simultaneously that your standard metrics don’t capture:

Test surface expands faster than test strategy. AI-generated code often introduces logic paths, edge case handling, and dependency interactions that no human explicitly designed. The test plan wasn’t written for them. Coverage tools will still report a percentage, but that number only tells you which lines or branches the existing tests happened to execute. It says nothing about whether those tests check the behaviors the new code introduced, or about the test surface that should exist.

Signal confidence degrades without warning. A test suite written against a manually authored codebase has a certain implied validity. When the underlying code grows dramatically in volume and complexity, those tests may no longer probe the highest-risk paths. They still pass. They just stop being the right tests.

Assumption drift accumulates between dev and QA. In slower delivery cycles, QA teams have time to understand what changed and why. Under AI-accelerated velocity, the handoff gets compressed. QA is reviewing the output of a system (the dev + AI pair) rather than a human decision process they can interrogate.

None of these failure modes show up as a defect. They show up as gaps in coverage you don’t know you have.

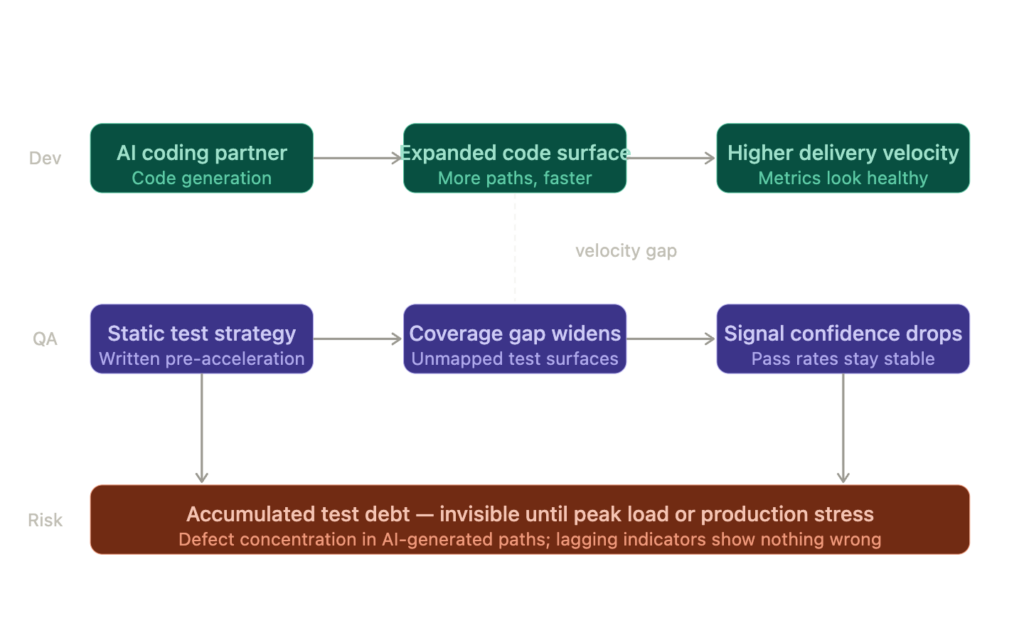

Here’s the structural view of how this gap forms and what it produces:

The pattern is consistent across teams: development accelerates, test coverage strategy doesn’t update in parallel, and the gap between what was tested and what was shipped widens silently until stress exposes it.

The collaboration friction layer

Developer-QA collaboration friction isn’t new. What’s new is the mechanism generating it.

When developers manually write code, a QA engineer can interrogate intent. They can read the logic, ask questions, map risk surfaces. The collaboration has friction, but it’s human-to-human friction — traceable and correctable.

When a developer is working with an AI coding partner, the artifact they hand to QA is different in character. It may contain logic structures the developer didn’t explicitly author. It may have handled edge cases the developer didn’t consciously consider. It may have introduced dependencies the developer didn’t fully review under time pressure.

This creates three specific friction points:

Test surface assumption drift. QA builds test plans based on what they expect the code to do. AI-generated code may do more — or different — things than the developer’s verbal handoff implies. The QA team is testing against a mental model of the code, not the code itself.

Prompt-generated logic opacity. When logic emerges from a prompt interaction rather than from deliberate design, its failure modes aren’t always obvious. The developer may not fully understand why the AI generated a particular implementation, which means they can’t reliably communicate the risk surface to QA.

Review compression under velocity pressure. Pressure to deliver faster shortens review windows. The time available for QA to interrogate the code, map risk, and design appropriate coverage shrinks precisely as the code volume grows.

How AI can sense the gap itself

Here’s where the conversation shifts from diagnosis to engineering: AI isn’t just the cause of this dynamic. It can also be a sensor for it.

The same AI capabilities used in development — classification, anomaly detection, pattern recognition — can be applied to detect collaboration gap indicators before they become defects.

Specifically, AI can be used to:

Measure test surface expansion rate versus test plan update rate. If code commits are accelerating but test specifications are not being updated proportionally, the gap is quantifiable. This is a risk reweighting signal — not a defect indicator, but a structural exposure indicator.
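A minimal sketch of this ratio in Python, assuming you can already count two things per time window (for example, per sprint): commits that touch production code, and changes to test specifications. The `WindowStats` type, its field names, and the 1.5 threshold are hypothetical illustrations, not an established metric.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    """Activity counts for one time window. Hypothetical fields for illustration."""
    code_commits: int       # commits touching production code
    test_spec_updates: int  # changes to test plans or test specifications


def expansion_ratio(current: WindowStats, baseline: WindowStats) -> float:
    """Code-activity growth divided by test-spec growth, relative to a baseline.

    1.0 means both grew proportionally; values above 1.0 mean code is
    outpacing the test plan.
    """
    code_growth = current.code_commits / max(baseline.code_commits, 1)
    test_growth = current.test_spec_updates / max(baseline.test_spec_updates, 1)
    # A window with zero test-spec updates yields a very large ratio
    # instead of raising ZeroDivisionError.
    return code_growth / max(test_growth, 1e-9)


def is_exposure_signal(current: WindowStats, baseline: WindowStats,
                       threshold: float = 1.5) -> bool:
    """Flag windows where code grew markedly faster than test specifications."""
    return expansion_ratio(current, baseline) > threshold


baseline = WindowStats(code_commits=40, test_spec_updates=20)
this_sprint = WindowStats(code_commits=80, test_spec_updates=22)
print(round(expansion_ratio(this_sprint, baseline), 2))  # 1.82 (code doubled, specs grew 10%)
print(is_exposure_signal(this_sprint, baseline))         # True
```

Growth ratios are used instead of raw counts so that a team whose code and test plans both double is not flagged; only the divergence between the two matters.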

Flag coverage confidence degradation. When AI can compare the logical structure of newly generated code against existing test coverage, it can surface paths with low or no test coverage that didn’t exist before the AI-generated commit. This moves coverage analysis from backward-looking (what share of the code the existing tests executed) to forward-looking (which surfaces are now unmapped).
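One way to make “newly unmapped surface” concrete is to diff the set of functions before and after a commit, then subtract whatever the coverage report says was exercised. The sketch below uses Python’s standard `ast` module at function granularity, a deliberate simplification of “logical paths.” The covered set is assumed to come from your coverage tool and is passed in as a plain set here; the sample `charge`, `retry_charge`, and `refund` code is hypothetical.

```python
import ast


def function_names(source: str) -> set[str]:
    """Names of every function and method defined anywhere in a module."""
    tree = ast.parse(source)
    return {
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    }


def unmapped_new_functions(before_src: str, after_src: str,
                           covered: set[str]) -> set[str]:
    """Functions introduced by the commit that no test exercised."""
    introduced = function_names(after_src) - function_names(before_src)
    return introduced - covered


before = """
def charge(amount):
    return amount
"""

after = """
def charge(amount):
    return amount

def retry_charge(amount, attempts=3):
    return charge(amount)

async def refund(amount):
    return -amount
"""

# Assumed input: function names your coverage report marks as executed.
covered = {"charge", "refund"}
print(unmapped_new_functions(before, after, covered))  # {'retry_charge'}
```

Matching on bare names conflates same-named methods in different classes; a production version would more likely key on qualified names or line ranges.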

Detect handoff compression signals. By analyzing the time between commit, review, and test specification update, AI can flag releases where the collaborative validation loop has been shortened below a threshold historically correlated with defect concentration.
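A sketch of the handoff-compression check, assuming you can pull three timestamps per release from your version control and test-management tools. `ReleaseTimeline`, the sample dates, and the 24-hour threshold are hypothetical. In practice the threshold would have to be calibrated against your own historical defect data, which this code does not attempt.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ReleaseTimeline:
    """Hypothetical per-release timestamps for the dev-to-QA validation loop."""
    release: str
    committed: datetime
    reviewed: datetime
    test_spec_updated: datetime


def compressed_releases(timelines: list[ReleaseTimeline],
                        min_loop: timedelta) -> list[tuple[str, timedelta, timedelta]]:
    """Return (release, review stage, QA stage) for every release whose
    commit-to-test-spec loop is shorter than min_loop."""
    flagged = []
    for t in timelines:
        review_stage = t.reviewed - t.committed      # commit -> code review
        qa_stage = t.test_spec_updated - t.reviewed  # review -> test spec update
        if review_stage + qa_stage < min_loop:
            flagged.append((t.release, review_stage, qa_stage))
    return flagged


history = [
    ReleaseTimeline("r1", datetime(2024, 5, 1, 9), datetime(2024, 5, 1, 15),
                    datetime(2024, 5, 3, 10)),
    ReleaseTimeline("r2", datetime(2024, 5, 10, 9), datetime(2024, 5, 10, 10),
                    datetime(2024, 5, 10, 13)),
]
for release, review, qa in compressed_releases(history, timedelta(hours=24)):
    print(release, review, qa)  # r2 1:00:00 3:00:00
```

Keeping the two stages separate shows where the loop was squeezed: a release with a healthy review but a three-hour QA stage calls for a different fix than the reverse.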

Identify assumption drift through semantic analysis. AI-assisted analysis of pull request descriptions, QA test plans, and code diffs can surface divergence — where the stated intent doesn’t map to what the code will actually do under stress.
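Full semantic analysis would typically involve a language model or embeddings. As a much cruder, dependency-free stand-in, the sketch below compares the vocabulary of a PR description with the identifiers in the lines it adds, and reports terms the description never mentions. This is a lexical proxy, not semantic analysis; the sample PR text and the `retry_charge` and `fallbackToCachedRate` names are hypothetical.

```python
import keyword
import re

# Language keywords carry no intent, so they are excluded from both sides.
_KEYWORDS = {k.lower() for k in keyword.kwlist}


def terms(text: str) -> set[str]:
    """Lowercased terms, splitting camelCase and snake_case identifiers."""
    spaced = re.sub(r"([a-z0-9])([A-Z])", r"\1 \2", text)  # fooBar -> foo Bar
    words = re.findall(r"[A-Za-z0-9]+", spaced)            # underscores split words
    return {w.lower() for w in words if len(w) > 2 and w.lower() not in _KEYWORDS}


def undescribed_terms(pr_description: str, added_lines: list[str]) -> set[str]:
    """Terms present in the added code but absent from the PR description."""
    return terms("\n".join(added_lines)) - terms(pr_description)


def drift_score(pr_description: str, added_lines: list[str]) -> float:
    """Share of code terms the description never mentions (0 = fully described)."""
    code_terms = terms("\n".join(added_lines))
    if not code_terms:
        return 0.0
    return len(undescribed_terms(pr_description, added_lines)) / len(code_terms)


description = "Add retry to payment charge"
added = [
    "def retry_charge(amount, attempts=3):",
    "    return fallbackToCachedRate(amount)",
]
print(sorted(undescribed_terms(description, added)))  # ['amount', 'attempts', 'cached', 'fallback', 'rate']
print(round(drift_score(description, added), 2))       # 0.71
```

A term like `fallback` appearing in the diff but not in the description is the kind of unstated behavior the paragraph above describes. The score is noisy and is best treated as a prompt for a human question, not a verdict.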

None of this replaces QA judgment. It extends it. The QA engineer still decides what matters. The AI surfaces what they might not have the visibility to see under velocity pressure.

What this costs at the enterprise level

A single uncovered code path at the right moment — peak load, launch day, regulatory audit — carries risk that compounds quickly.

Consider the enterprise exposure profile when this gap is allowed to widen systematically:

Test debt accumulates as a balance sheet liability. Every release cycle where coverage doesn’t keep pace with code adds to a latent defect backlog that isn’t visible in any standard metric. This isn’t theoretical — it’s a concentration of defect risk in untested AI-generated paths that will surface under stress.

The trust cost is disproportionate. When a production failure occurs in a path that “should have been tested,” the trust erosion with customers and internal stakeholders doesn’t scale linearly with the severity of the failure. It scales with the perception that the failure was preventable — and failures in AI-accelerated code paths will increasingly be seen as exactly that.

Speed as risk amplifier. The business case for AI-accelerated development assumes that faster delivery creates competitive advantage. That case is valid when delivery velocity is matched with risk visibility. When it isn’t, velocity becomes a risk amplifier — defects accumulate faster, surface faster, and at greater scale.

What leadership should govern

Engineering leaders managing AI adoption often focus on adoption metrics: how many developers are using AI tools, what productivity gains are being captured, where rollout is stalling. These are valid inputs.

They’re not sufficient governance.

What the developer-QA AI divide demands is a different governance conversation: one focused on the collaboration gap velocity — the rate at which development AI capability is outpacing QA AI maturity, and the risk exposure that gap is accumulating.

Concretely, this means engineering leadership should be asking:

How current is our test strategy relative to our code surface? Not “what is our test coverage percentage,” but “when was our test strategy last updated relative to the pace of AI-assisted code generation?”

Where is our signal confidence lowest? Which parts of the codebase have the highest proportion of AI-generated code and the lowest corresponding test depth? That intersection is the risk concentration point.
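The intersection described here can be expressed as a simple per-module score: the share of AI-generated code multiplied by the share of missing test depth. A minimal sketch, assuming you have some way to attribute lines to AI assistance and some normalized 0-to-1 test-depth measure (branch coverage or a mutation score are plausible choices). `ModuleStats`, the multiplicative formula, and the sample numbers are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class ModuleStats:
    """Hypothetical per-module inputs, both normalized to the range 0..1."""
    name: str
    ai_generated_share: float  # fraction of lines attributed to AI assistance
    test_depth: float          # e.g. branch coverage or mutation score


def risk_concentration(m: ModuleStats) -> float:
    """High only when a module is both heavily AI-generated and thinly tested."""
    return m.ai_generated_share * (1.0 - m.test_depth)


def rank_hotspots(modules: list[ModuleStats], top_n: int = 3) -> list[ModuleStats]:
    """Modules sorted from highest to lowest risk concentration."""
    return sorted(modules, key=risk_concentration, reverse=True)[:top_n]


modules = [
    ModuleStats("billing", ai_generated_share=0.7, test_depth=0.3),
    ModuleStats("auth", ai_generated_share=0.2, test_depth=0.4),
    ModuleStats("reporting", ai_generated_share=0.8, test_depth=0.9),
]
for m in rank_hotspots(modules, top_n=2):
    print(m.name, round(risk_concentration(m), 2))
```

The multiplicative form is a design choice: a heavily AI-generated module with deep tests, such as `reporting` above, scores low, which matches the point that the intersection, not either factor alone, concentrates risk.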

What does the dev-QA handoff actually look like under velocity pressure? Is QA genuinely reviewing AI-generated code for risk, or reviewing it for known patterns while assuming AI-generated logic is structurally similar to manually authored code?

How would we detect this gap before it becomes a defect? If your current process has no mechanism for surfacing coverage gaps in AI-generated code paths, that absence is a governance gap — not a QA gap.

The Engineering Risk Exposure Index, a structured scoring framework I’ll introduce in an upcoming post, provides one mechanism for quantifying and governing this exposure. But the governance instinct needs to come first: the right question is not “are we covered?” but “do we know where we’re not covered, and at what confidence level?”

The takeaway

When AI coding partners accelerate development faster than QA teams can adapt their test strategy, the result isn’t faster delivery. It’s invisible risk accumulation that surfaces under stress, at scale, in front of the people you can least afford to disappoint.

The gap is real, it’s measurable, and it’s governable — but only if your organization is asking the right questions before the dashboards go red.

Sources / Further reading

- Gartner: AI Augmentation in Software Development Lifecycle (2023 survey)

- IEEE Software: Test Coverage Adequacy in AI-Assisted Development (ongoing research thread)

- ISTQB: AI Testing guidance, https://www.istqb.org/certifications/artificial-intelligence-tester

- Google Engineering Practices: Code Review Developer Guide, https://google.github.io/eng-practices/

- DORA Research: Accelerate: State of DevOps (annual), https://dora.dev