A release train looks healthy on paper. The pipeline is full. Thousands of checks run on every merge. Coverage numbers look respectable. Automation is expanding. Yet engineers are stuck rerunning jobs, scanning familiar failures, and arguing over whether a red build means product risk or test instability.

The dashboard says the system is controlled. The delivery team feels the opposite.

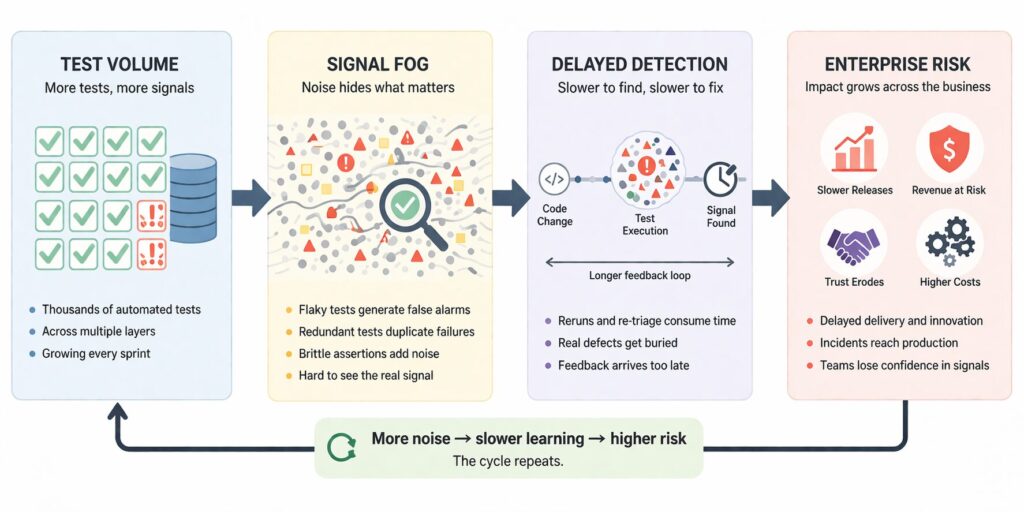

That pattern is more common than many teams admit. Test growth is usually treated as proof of quality maturity. But after a certain point, adding more tests without improving signal quality can make a system harder to trust. The suite gets larger. The interpretation burden gets heavier. Real defects take longer to isolate. The team collects more output while gaining less clarity.

That is the central problem: more tests can increase noise and delay.

This is not an argument against automation. It is an argument against confusing test volume with risk visibility.

Why “more tests” feels like the safe answer

The instinct makes sense. If some testing is good, more testing should feel safer. More checks seem to mean more coverage, more validation, and fewer surprises. Teams under delivery pressure often default to accumulation because adding a test is easier than questioning whether the existing suite is still producing useful information.

That logic works at first. A growing suite often does catch new regressions, raise confidence, and improve release discipline.

Then the suite matures. The product changes. Infrastructure shifts. Ownership gets distributed. Old assumptions stay in place. Tests begin to overlap. Some depend on unstable environments. Some assert behavior that no longer matters. Some fail intermittently for reasons unrelated to product risk. Few are removed because deleting a test feels dangerous, even when the test is already costing more trust than it provides.

At that stage, the test suite stops behaving like a clean sensing system. It starts behaving like a crowded signal channel.

The real problem is not volume. It is signal fog.

Signal fog appears when the effort required to interpret test results grows faster than the value of the results themselves.

A noisy test system does not simply waste compute. It changes human behavior. Engineers start discounting failures because many are familiar and non-actionable. Build breaks lose urgency. Reruns become routine. Investigation shifts from “What changed?” to “Is this one real?” Over time, the team becomes slower not because it lacks checks, but because it lacks trustworthy meaning.

That is why flaky tests matter so much. Their damage is not limited to occasional false alarms. Their deeper damage is cognitive. They teach engineers that red does not always mean risk. Once that trust erodes, even valid failures arrive inside an already degraded channel.

The result is subtle but serious: the suite still produces activity, but it no longer produces proportionate clarity.

How test growth turns into delay

There are several common ways this happens.

Flaky tests distort confidence

A flaky test fails inconsistently without a corresponding product defect. Sometimes the issue is timing. Sometimes shared state. Sometimes environment drift. Sometimes external dependency behavior. The exact cause varies, but the effect is consistent: uncertainty enters the pipeline.

One flaky test is annoying. A collection of flaky tests changes system behavior. Teams rerun pipelines, compare runs, and wait for a stable pattern before making a decision. That extra time is not neutral overhead. It increases detection latency.
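
One way to make that uncertainty visible is to look for tests that both pass and fail against identical code. The sketch below assumes run history is available as `(test_name, commit_sha, passed)` tuples; that record shape and the function name `find_flaky_tests` are illustrative assumptions, not the output of any particular CI tool.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """Return test names that both passed and failed on the same commit.

    `runs` is an iterable of (test_name, commit_sha, passed) tuples.
    The tuple layout is a hypothetical export format.
    """
    outcomes = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)
    # Mixed outcomes on identical code point at the test or its
    # environment rather than at a product change.
    return sorted({name for (name, _), seen in outcomes.items() if len(seen) == 2})


runs = [
    ("test_checkout", "abc123", True),
    ("test_checkout", "abc123", False),  # same commit, different result
    ("test_login", "abc123", False),
    ("test_login", "abc123", False),     # consistent failure: more likely real
    ("test_search", "def456", True),
]
flaky = find_flaky_tests(runs)
print(flaky)
```

A consistent failure such as `test_login` is deliberately not flagged. Only disagreement on the same commit counts as evidence of flakiness here.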

Redundant tests create false assurance

Many suites expand by layering similar checks across unit, integration, API, UI, and end-to-end levels without a clear sensing purpose. The same defect can trigger five failures in five places, while another risk remains weakly observed.

From a distance, that looks like thoroughness. In practice, it can create a failure surface that is wider than it is informative. Engineers see many red indicators, but they do not necessarily get better localization or clearer causality.

Brittle assertions increase maintenance drag

Some tests pass only when the world behaves exactly as expected, even if small variations are harmless. They may assert formatting details, ordering quirks, or timing behavior that is not central to user or system risk. These tests tend to break often and explain little.

When that happens at scale, the suite becomes expensive not only to run, but to interpret and maintain.

Slow suites compress feedback value

Even reliable tests lose value when they return information too late. A test that detects risk six hours after a change is not equivalent to one that detects risk in six minutes. Large suites often grow without a corresponding strategy for execution timing, failure routing, or prioritization.

So the delay problem has two layers: too many questionable signals, and too much time before the useful ones become clear.

Why common metrics can mislead

This is where many organizations get trapped. They measure what is easy to count rather than what actually improves decision quality.

Consider a few familiar metrics:

| Common metric | What it tells you | What it often misses |
|---|---|---|
| Total test count | The suite is growing | Whether the added tests improve clarity |
| Automation percentage | More checks are automated | Whether those checks are reliable or meaningful |
| Pass rate | Most tests passed on a run | Whether repeated failures are trusted or ignored |
| Coverage percentage | Code or flows are exercised | Whether important exposure is observed well |
| Defect escape count | Some production failures were prevented or missed | How much noise delayed earlier detection |

These metrics are not useless. They are incomplete.

A team can increase automation, expand coverage, and still lose visibility into real exposure if signal quality declines. In fact, that is often how the problem hides. The organization sees growth in measurable activity and assumes risk visibility improved. Meanwhile, the people operating the pipeline know the truth: the suite is getting louder, not sharper.

That is why “more tests” can feel productive while quietly slowing the system.

What signal fog does to engineering teams

Signal fog changes more than dashboards. It changes operating behavior.

First, it increases triage latency. Engineers spend more time deciding which failures matter before they can begin fixing anything. That pushes real detection later in the cycle.

Second, it increases context switching. Instead of following a clean path from failure to diagnosis, engineers bounce between logs, reruns, historical patterns, and informal team memory. That interrupts delivery flow.

Third, it reduces shared trust. Developers start questioning QA signals. QA starts defending the suite rather than improving it. Platform teams inherit recurring instability. Leaders hear that “tests failed” without knowing whether the issue is product exposure, environment instability, or test architecture debt.

Fourth, it rewards workaround behavior. Teams retry until green, mute noisy checks, or accept uncertainty to keep flow moving. Those responses are understandable, but they gradually normalize weak signal quality.

This is how a testing problem becomes an engineering systems problem.

AI can help, but only as a sensing layer

AI is useful here, but only if it is framed correctly.

It is not a substitute for test design. It does not repair unstable architecture by itself. It does not turn weak checks into good ones. What it can do is improve how the organization reads noisy test output.

That matters because the first failure in many delivery systems is not a lack of data. It is an inability to interpret that data fast enough.

Here are the most practical ways AI helps.

Failure classification

AI can group failures into categories such as likely infrastructure instability, dependency drift, product regression, environment setup issue, or historically flaky pattern. That reduces the time engineers spend doing first-pass sorting.
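
As a rough illustration of first-pass sorting, the sketch below uses hand-written regular expressions as a simple stand-in for a learned classifier. The category names follow the list above; the patterns, the `RULES` table, and the `known_flaky` flag are all assumptions made for the example.

```python
import re

# Hypothetical patterns. A real system would more likely learn these
# from labeled failure history than hard-code them.
RULES = [
    ("infrastructure", re.compile(r"connection refused|timed out|no space left", re.I)),
    ("dependency_drift", re.compile(r"version conflict|could not resolve|modulenotfounderror", re.I)),
    ("environment_setup", re.compile(r"missing env|permission denied|not configured", re.I)),
]


def classify_failure(message, known_flaky=False):
    """Assign a coarse category to a failure message."""
    if known_flaky:
        return "historically_flaky"
    for category, pattern in RULES:
        if pattern.search(message):
            return category
    # Nothing matched a known non-product cause, so keep it on the
    # list of candidates for a real regression.
    return "possible_product_regression"


print(classify_failure("Connection refused by db:5432"))
print(classify_failure("AssertionError: expected 200, got 500"))
```

The fallback category is intentionally conservative: an unrecognized failure is treated as a possible regression rather than silently discounted.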

Signal clustering

Large suites often produce many related failures from one underlying issue. AI can cluster similar signatures across jobs, services, and layers, helping teams see one root pattern instead of treating each failure as a separate event.
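
A minimal version of this idea needs no model at all: mask the volatile parts of each failure message and group by what remains. The sketch below assumes failures arrive as `(job, message)` pairs; the masking rules and the `{n}` and `{hex}` placeholders are illustrative choices, and a production system would likely use a richer similarity measure.

```python
import re
from collections import defaultdict


def signature(message):
    """Reduce a failure message to a coarse signature by masking volatile parts."""
    sig = re.sub(r"0x[0-9a-fA-F]+", "{hex}", message)  # addresses, object ids
    sig = re.sub(r"\d+", "{n}", sig)                    # durations, ports, counts
    return sig.strip().lower()


def cluster_failures(failures):
    """Group (job, message) pairs by message signature."""
    clusters = defaultdict(list)
    for job, message in failures:
        clusters[signature(message)].append(job)
    return dict(clusters)


failures = [
    ("api-tests", "Timeout after 30s calling payments:8443"),
    ("ui-tests", "Timeout after 45s calling payments:8443"),
    ("e2e-tests", "timeout after 60s calling payments:8443"),
    ("unit-tests", "AssertionError: expected 3 items"),
]
clusters = cluster_failures(failures)
for sig, jobs in clusters.items():
    print(len(jobs), sig, jobs)
```

Here three red jobs collapse into one timeout pattern, which is the view a triaging engineer actually needs.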

Confidence scoring

Not every red result should carry equal weight. AI can attach confidence indicators based on prior runs, environment context, recent changes, and known instability patterns. That helps teams distinguish strong signals from weak ones.
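
The sketch below shows one simple way such an indicator could be assembled, as a hand-tuned heuristic rather than a trained model. The `FailureContext` fields and the 0.5 discount factors are assumptions chosen for illustration; real weights would need calibration against a team's own history.

```python
from dataclasses import dataclass


@dataclass
class FailureContext:
    flake_rate: float           # share of past failures that passed on rerun (0.0 to 1.0)
    touches_changed_code: bool  # does the test exercise files in the current change?
    environment_healthy: bool   # did infrastructure checks pass for this run?


def confidence(ctx):
    """Estimate how likely a red result reflects a real problem (0.0 to 1.0)."""
    score = 1.0 - ctx.flake_rate
    if not ctx.touches_changed_code:
        score *= 0.5  # arbitrary discount: failure is unrelated to the change
    if not ctx.environment_healthy:
        score *= 0.5  # arbitrary discount: the environment itself is suspect
    return round(score, 2)


strong = confidence(FailureContext(0.0, True, True))
weak = confidence(FailureContext(0.5, False, True))
print(strong, weak)
```

The point is not the specific numbers but that the score is explainable: each discount corresponds to a reason an engineer would already weigh informally.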

Risk reweighting

A suite usually treats results as binary: pass or fail. Real systems are not that simple. AI can help re-rank failures by probable user impact, service criticality, blast radius, and time sensitivity. That changes triage from raw volume management to exposure-focused investigation.
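
A weighted sum is the simplest form of this re-ranking. In the sketch below, each failure carries a 1 to 5 rating for the four factors named above; the `WEIGHTS` values, the rating scale, and the field names are hypothetical and would have to be agreed by the team that owns the services.

```python
# Hypothetical weights; they sum to 1.0 so scores stay on the 1-5 scale.
WEIGHTS = {
    "user_impact": 0.4,
    "criticality": 0.3,
    "blast_radius": 0.2,
    "time_sensitivity": 0.1,
}


def risk_score(failure):
    """Weighted sum of the 1-5 ratings attached to a failure."""
    return sum(weight * failure[factor] for factor, weight in WEIGHTS.items())


def rank(failures):
    """Order failures from highest to lowest estimated exposure."""
    return sorted(failures, key=risk_score, reverse=True)


failures = [
    {"test": "test_admin_report", "user_impact": 1, "criticality": 2, "blast_radius": 1, "time_sensitivity": 1},
    {"test": "test_checkout", "user_impact": 5, "criticality": 5, "blast_radius": 4, "time_sensitivity": 5},
    {"test": "test_search", "user_impact": 3, "criticality": 3, "blast_radius": 3, "time_sensitivity": 2},
]
ranked = rank(failures)
print([f["test"] for f in ranked])
```

All three tests are equally red in a binary view. Ranked by exposure, the checkout failure is investigated first.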

This is the important distinction: AI does not make the system safe. It helps the team see which signals deserve attention first.

The deeper issue is exposure visibility

A quiet but important mistake in many organizations is assuming that testing exists mainly to generate confidence. In practice, testing should do something more precise: reveal exposure early enough, clearly enough, and reliably enough for a decision to be made.

Once you look at testing that way, the problem with excess noisy volume becomes obvious.

A test suite is not just a collection of checks. It is part of the organization’s sensing architecture. Its job is not to produce maximum output. Its job is to surface meaningful changes in risk.

That is why a smaller, cleaner, trusted suite can be more valuable than a larger one that constantly blurs the signal. The question is not whether the pipeline is busy. The question is whether the pipeline helps the team distinguish real exposure from background instability.

What engineers should measure instead

If test count and pass rate are not enough, what should teams pay more attention to?

A more useful view includes measures like these:

- Failure trust rate: how often a failed test leads to actionable product or infrastructure work

- Triage latency: how long it takes to classify a failure with reasonable confidence

- Duplicate signal density: how often one issue creates many near-identical failures

- Flake recurrence: how often the same unstable tests consume team time across runs

- Detection timing: how early meaningful failures appear relative to code change and release decision points

- Signal clarity: how easily a failure points engineers toward a probable cause

These measures are harder to collect than raw counts. They are also closer to the real problem.
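
To show that some of these measures are still computable once triage outcomes are recorded, the sketch below derives failure trust rate, triage latency, and duplicate signal density from a small log. The record fields (`actionable`, `triage_minutes`, `issue_id`) are assumed for the example; collecting them is the hard part, and no standard schema is implied.

```python
from statistics import median


def failure_trust_rate(failures):
    """Share of failures that led to actionable product or infrastructure work."""
    return sum(f["actionable"] for f in failures) / len(failures)


def median_triage_minutes(failures):
    """Median time taken to classify a failure."""
    return median(f["triage_minutes"] for f in failures)


def duplicate_signal_density(failures):
    """Average number of failures produced per distinct underlying issue."""
    issues = {f["issue_id"] for f in failures}
    return len(failures) / len(issues)


# Hypothetical triage log for one day of pipeline runs.
triage_log = [
    {"actionable": True, "triage_minutes": 10, "issue_id": "A"},
    {"actionable": False, "triage_minutes": 30, "issue_id": "B"},
    {"actionable": False, "triage_minutes": 50, "issue_id": "A"},
    {"actionable": True, "triage_minutes": 20, "issue_id": "A"},
]
trust = failure_trust_rate(triage_log)
latency = median_triage_minutes(triage_log)
density = duplicate_signal_density(triage_log)
print(trust, latency, density)
```

Tracked over time, a falling trust rate or a rising duplicate density would indicate a suite that is getting louder rather than sharper.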

A healthy quality system does not just execute tests. It produces timely, interpretable evidence.

Why this matters beyond the engineering floor

Signal fog is easy to dismiss as a local annoyance. It is not.

At the enterprise level, noisy test systems create three forms of risk.

The first is decision delay. Release readiness becomes harder to judge. Leaders wait longer for confidence, or ship with less of it.

The second is resource distortion. Skilled engineers spend valuable hours separating noise from meaning instead of reducing real failure exposure.

The third is trust erosion. When quality signals become hard to interpret, leadership either overreacts to noise or underreacts to real warnings. Neither outcome is stable.

This is where technical testing debt becomes a governance problem. If the organization cannot tell which failures matter, then investment, prioritization, and release decisions are being made on degraded information.

That is not just a tooling issue. It is a risk visibility issue.

The leadership question is not “How many tests do we have?”

A better leadership question is: How much trustworthy signal do our tests produce?

That shift sounds small, but it changes what gets funded and reviewed.

It pushes teams to retire low-value checks instead of only adding new ones.

It pushes platform owners to address recurring instability as a reliability issue, not a nuisance.

It pushes engineering leaders to look at detection clarity and delay, not just suite size.

It pushes governance toward exposure visibility rather than activity reporting.

In other words, mature oversight does not reward test growth by itself. It rewards the ability to see real risk sooner and more clearly.

That is the point many organizations miss. A large suite can create the appearance of control while hiding the exact thing leadership needs most: timely understanding of where the system is actually fragile.

A more disciplined way to think about testing

The goal is not fewer tests as a matter of principle. The goal is a better signal system.

That usually means asking harder questions:

- Which tests repeatedly create ambiguity without improving detection?

- Which failures arrive often but rarely change engineering action?

- Which checks are overlapping rather than complementary?

- Where does the suite reveal critical exposure too late?

- Which red results are trusted immediately, and which trigger skepticism?

Those questions help teams move from test accumulation to signal design.

That is a healthier frame for modern engineering. Especially as systems become more distributed, release velocity increases, and AI-generated code expands overall change volume, the cost of noisy detection rises. Teams do not just need more verification. They need a sensing model that stays interpretable under pressure.

Closing thought

More tests can absolutely increase safety. But only when they increase useful visibility.

Once signal quality falls, added volume can bury the very risks the suite was meant to expose. The dashboard stays active. The pipeline stays full. The organization keeps measuring activity. Yet the real system becomes harder to read.

That is why flaky tests and signal fog deserve more respect than they often get. They are not minor workflow annoyances. They are symptoms of a sensing layer that no longer matches the decisions the organization needs to make.

When that happens, the answer is not blind expansion. It is better signal discipline.

Because the job of a test system is not to be loud.

It is to be trusted.