A feature is delivered faster than expected.

An AI coding partner helped generate parts of the implementation. It also suggested unit tests. The pull request moved quickly—reviewed, approved, merged.

CI pipelines were clean:

- All tests passed

- Coverage improved

- No obvious regressions

Days later, under peak usage, the system begins to struggle.

Latency increases. Retries accumulate. Downstream services degrade. Eventually, parts of the system fail in sequence.

Nothing obvious was “broken.”

But something was clearly unaccounted for.

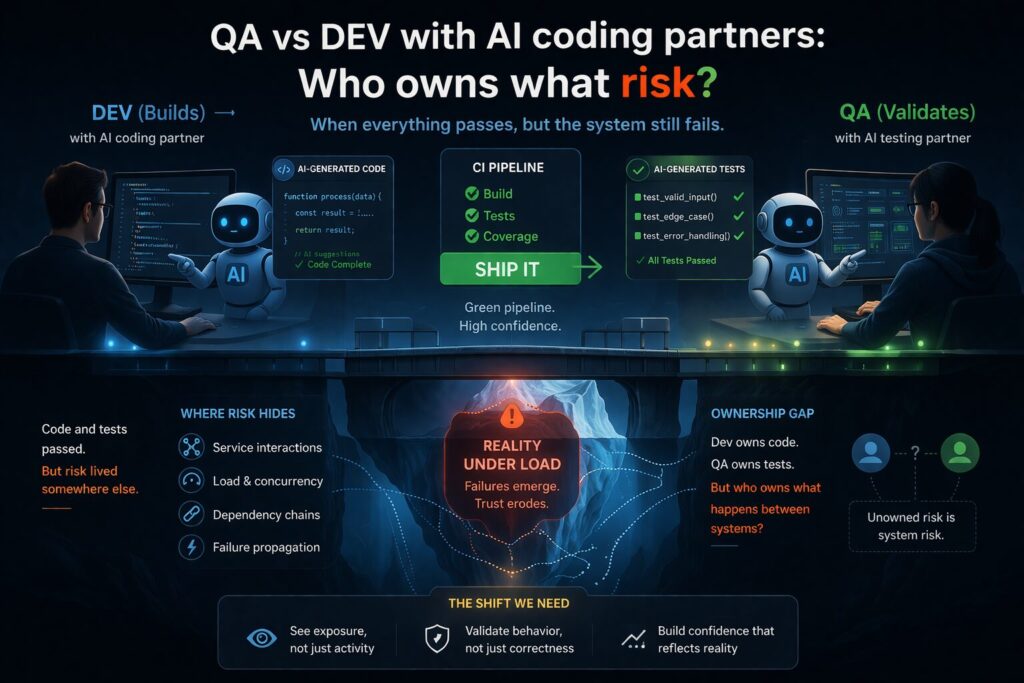

The Dev vs QA model assumes separation that no longer exists

The traditional model is simple:

- Developers build

- QA validates

This model relies on a key property:

Independence between creation and verification.

AI coding partners weaken that independence.

Now:

- Code may be generated or heavily assisted

- Tests may also be generated or suggested

- Both can share the same assumptions, patterns, and blind spots

This creates a subtle but critical condition:

The system can appear correct because its validation agrees with its construction.

Not because it is correct under real conditions.

A new failure pattern: correlated correctness

AI introduces a failure mode that is easy to miss.

Code and tests agree—but on incomplete assumptions

AI-generated tests often:

- follow code structure closely

- validate expected paths

- miss interaction complexity

This leads to:

- High coverage

- High pass rates

- Low real-world confidence

Because what is being validated is:

- internally consistent

- but externally incomplete
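As a minimal sketch of this pattern, consider a hypothetical `average_latency_ms` helper (the name and scenario are assumptions for illustration, not taken from any real codebase). The first test mirrors the implementation and shares its assumption that the sample list is never empty. The second check is written from the caller's side and exposes the gap.

```python
def average_latency_ms(samples):
    """Hypothetical helper: mean of latency samples in milliseconds."""
    return sum(samples) / len(samples)


def test_mirrors_implementation():
    # Follows the code's structure and inherits its assumption:
    # there is always at least one sample.
    assert average_latency_ms([100, 200, 300]) == 200


def independent_check():
    # Written from the caller's point of view: a quiet monitoring
    # window can legitimately contain no samples at all.
    try:
        average_latency_ms([])
    except ZeroDivisionError:
        return "fails on empty window"
    return "ok"
```

The mirrored test passes and executes every line of the helper, yet the empty-window case is never examined until something outside the shared assumption asks about it.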

AI doesn’t just accelerate output—it amplifies signals

If your signals are strong, AI helps.

If your signals are weak or biased, AI scales that weakness.

Three shifts become visible:

1. Confidence grows faster than evidence

More code.

More tests.

Faster approvals.

But not necessarily:

- better understanding

- better coverage of system behavior

2. Review depth decreases

Human reviewers shift toward:

- pattern recognition

- surface-level validation

Because:

- “Tests are passing”

- “It looks consistent”

AI reduces friction—but also reduces scrutiny.

3. System behavior is under-modeled

Most validation still focuses on:

- unit correctness

- service-level expectations

But failures occur in:

- service interactions

- concurrency

- retries and cascading dependencies

These are rarely captured in generated tests.
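To illustrate why retries are an interaction problem rather than a unit-level one, here is a small simulation. It assumes a chain of services in which every layer retries a failing call a fixed number of times; `Dependency`, `with_retries`, and `calls_reaching_dependency` are hypothetical names used only for this sketch.

```python
class Dependency:
    """Bottom-level dependency that is down and counts incoming calls."""

    def __init__(self):
        self.calls = 0

    def __call__(self):
        self.calls += 1
        raise TimeoutError("dependency unavailable")


def with_retries(downstream, attempts):
    """Wrap a callable so it is tried up to `attempts` times (attempts >= 1)."""
    def call():
        last_error = None
        for _ in range(attempts):
            try:
                return downstream()
            except TimeoutError as err:
                last_error = err
        raise last_error
    return call


def calls_reaching_dependency(depth, attempts):
    """Count calls hitting the failed dependency for one incoming request."""
    dependency = Dependency()
    handler = dependency
    for _ in range(depth):
        handler = with_retries(handler, attempts)
    try:
        handler()
    except TimeoutError:
        pass  # the request fails either way; we only care about the call count
    return dependency.calls
```

With three layers and three attempts each, `calls_reaching_dependency(3, 3)` counts 27 calls against a dependency that is already down, all triggered by a single incoming request. A unit test of any one layer would only ever observe three.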

Why your current metrics are giving you false comfort

Organizations still rely on familiar indicators:

- Test pass rate

- Code coverage

- PR cycle time

- Defect counts

Under AI-assisted development, these signals degrade further.

Because they measure:

- Activity, not exposure

- Agreement, not independence

- What was tested, not what was missed

A green pipeline now often means:

“All generated assumptions were internally consistent.”

That is not the same as system reliability.

So who owns the risk?

This is where the conversation usually stalls.

- Dev says: “The code works.”

- QA says: “The tests passed.”

Both are correct—within their scope.

But the failure didn’t occur within those scopes.

It occurred:

- between services

- under load

- across dependencies

In other words:

The risk existed outside role-based ownership.

Reframing the problem: from ownership of work to visibility of exposure

Instead of asking:

- Who owns the code?

- Who owns the tests?

More useful questions are:

- Where does the system carry unobserved risk?

- How visible is that risk before it turns into failure?

This shift matters more in AI-assisted environments because:

- development is faster

- validation is more coupled

- signals appear stronger than they actually are

A simple way to think about exposure

At a practical level, risk in a system can be thought of as the product of four factors:

$$\text{Risk} = \text{Probability} \times \text{Impact} \times \text{Detection Latency} \times \text{Confidence Adjustment}$$

In plain terms:

- Probability: How likely is this failure condition?

- Impact: What happens if it occurs?

- Detection Latency: How long will it take us to notice it?

- Confidence Adjustment: How much should we discount our signals? The less independent the validation, the larger this factor.

AI affects all four:

- It can reduce perceived probability (because tests pass)

- It does not reduce actual impact

- It often increases detection latency (failures appear under stress)

- It inflates confidence (because validation is not fully independent)

This creates a gap:

Signals improve faster than actual understanding of system behavior.
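The formula above can be sketched as a small scoring function. The scales are assumptions chosen for illustration: probability between 0 and 1, impact and detection latency as relative scores, and a confidence adjustment of 1.0 for fully independent validation that grows as validation becomes more coupled to the code. `Exposure` and the example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exposure:
    probability: float                   # assumed range 0..1
    impact: float                        # relative score, higher is worse
    detection_latency: float             # relative score, higher is slower
    confidence_adjustment: float = 1.0   # assumed >= 1.0; grows with coupling

    def risk(self) -> float:
        # Straight product of the four factors from the formula.
        return (self.probability * self.impact
                * self.detection_latency * self.confidence_adjustment)


# Same failure condition, scored as the dashboard reports it...
as_reported = Exposure(probability=0.25, impact=8, detection_latency=2)

# ...and again after discounting for tests that came from the same
# source as the code (the 1.5 multiplier is an illustrative guess).
adjusted = Exposure(probability=0.25, impact=8, detection_latency=2,
                    confidence_adjustment=1.5)
```

Here `as_reported.risk()` evaluates to 4.0 and `adjusted.risk()` to 6.0. The absolute numbers carry little meaning; a score like this is mainly useful for ranking where exposure is likely to be concentrated.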

What this means in practice

You don’t need more process.

You need clearer visibility into where risk accumulates.

Three practical shifts:

1. Stop treating passing tests as a proxy for safety

Passing tests show:

- alignment with expectations

They do not show:

- coverage of real-world conditions

2. Question the independence of validation

Ask:

- Were the tests generated or suggested by the same AI tool that produced the code?

If yes:

- treat confidence as lower than it appears

3. Focus on system interactions, not just components

Prioritize validation around:

- service boundaries

- retries and fallbacks

- dependency behavior

This is where failures emerge.
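One way to validate a service boundary is to inject the failure deliberately instead of waiting for production to do it. The sketch below assumes a hypothetical `fetch_with_fallback` wrapper and a `FlakyService` test double; neither is a real library API.

```python
def fetch_with_fallback(primary, fallback, attempts=2):
    """Try `primary` up to `attempts` times, then use `fallback`."""
    for _ in range(attempts):
        try:
            return primary()
        except ConnectionError:
            continue
    return fallback()


class FlakyService:
    """Test double that fails a set number of times before succeeding."""

    def __init__(self, failures):
        self.failures = failures
        self.calls = 0

    def __call__(self):
        self.calls += 1
        if self.calls <= self.failures:
            raise ConnectionError("injected failure")
        return "live"


def test_recovers_after_one_failure():
    service = FlakyService(failures=1)
    assert fetch_with_fallback(service, lambda: "cached") == "live"
    assert service.calls == 2


def test_falls_back_and_stops_calling():
    service = FlakyService(failures=10)
    assert fetch_with_fallback(service, lambda: "cached") == "cached"
    # The retry budget is respected even though the service stays down.
    assert service.calls == 2
```

The second test is the one that tends to be missing: it asserts not only that the fallback is used, but also that the caller stops hitting a dependency that is still failing.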

Enterprise translation: the velocity illusion

From a leadership perspective, this creates a subtle but important risk.

- Delivery appears faster

- Metrics look healthier

- Failures feel unexpected

This leads to:

- reduced trust during incidents

- increased scrutiny after failure

- hesitation in future releases

In effect:

Local speed improves, but system predictability declines.

Governance: what should actually change

The response should not be more approvals or more tests.

That increases noise without improving signal.

Instead, leadership should focus on three questions:

1. Where is the system most exposed?

Not where most work is happening—but where failure is most likely and least visible.

2. What evidence exists under stress conditions?

Not just correctness in isolation, but behavior under:

- load

- failure

- dependency strain
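A cheap first piece of evidence about load is a deterministic simulation, run before any real load test. The sketch below assumes a single worker with a fixed service time and evenly spaced arrivals, which is a deliberate simplification; `max_wait_ms` and the numbers used with it are hypothetical.

```python
def max_wait_ms(arrival_interval_ms, service_ms, requests):
    """Longest queueing delay for evenly spaced requests on one worker."""
    free_at = 0.0   # time at which the worker next becomes idle
    worst = 0.0
    for i in range(requests):
        arrived = i * arrival_interval_ms
        start = max(arrived, free_at)        # wait if the worker is busy
        worst = max(worst, start - arrived)
        free_at = start + service_ms
    return worst


nominal = max_wait_ms(arrival_interval_ms=10, service_ms=8, requests=100)
peak = max_wait_ms(arrival_interval_ms=5, service_ms=8, requests=100)
```

At the nominal rate no request waits at all. At the peak rate the same code, with the same 8 ms service time, leaves the hundredth request queueing for 297 ms, and the delay keeps growing with every additional request.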

3. How reliable are our confidence signals?

If validation is not independent:

- confidence should be discounted

Especially in AI-assisted workflows.

Closing thought

AI coding partners did not create new categories of failure.

They changed how quickly those failures surface—and how well they are hidden beforehand.

- Code and validation can now agree more easily

- Metrics can improve without reducing risk

- Systems can fail in places no one explicitly owns

The question is no longer:

“Did Dev or QA miss this?”

It is:

“Where was the risk—and how visible was it before failure?”