The dashboards showed 94% test pass rates. Coverage hovered above threshold. Velocity was stable.

Then a large SaaS platform’s checkout flow degraded under peak traffic. Not a crash — a slow, compounding failure. Timeouts crept up. Retry storms followed. Revenue leaked quietly for four hours before anyone connected the dots.

The QA team had run their suite the morning before. Everything was green.

What broke wasn’t the test coverage number. What broke was the assumption that those numbers said something meaningful about risk. And when leadership asked why AI-assisted testing hadn’t caught it, there was no shared answer: the QA team itself was divided on whether AI belonged in its process at all.

That split is worth examining. Not because adoption debates are interesting, but because what’s underneath that split tells you something precise about organizational risk exposure.

The Split Is Real, and It Runs Deep

Walk into most mid-to-large QA or quality engineering teams today and you’ll find three distinct groups.

The first group has embraced AI-assisted tooling (test generation, anomaly flagging, flakiness detection) and is moving fast. They’re experimenting, iterating, and building intuition about what AI does well and where it misleads them.

The second group is skeptical, often for legitimate reasons. They’ve watched AI-generated tests that looked complete but covered trivial paths. They’ve seen flakiness detection that surfaced noise rather than signal. Their skepticism is earned, not reactionary.

The third group — arguably the largest — is watching and waiting. They’re not opposed. They’re uncertain. They don’t yet have a framework for evaluating what AI-assisted testing would actually change about their risk posture.

Each of these groups is doing something rational given what they know. That’s important context. This isn’t primarily a change management problem or a training gap, though both matter. It’s a measurement problem. These teams don’t have a shared language for what they’re actually trying to improve.

Why the Split Is a Risk Visibility Signal

Here’s the reframe: when a QA organization is fractured on AI adoption, it’s often because the team lacks a shared, precise definition of what “better testing” means in risk terms.

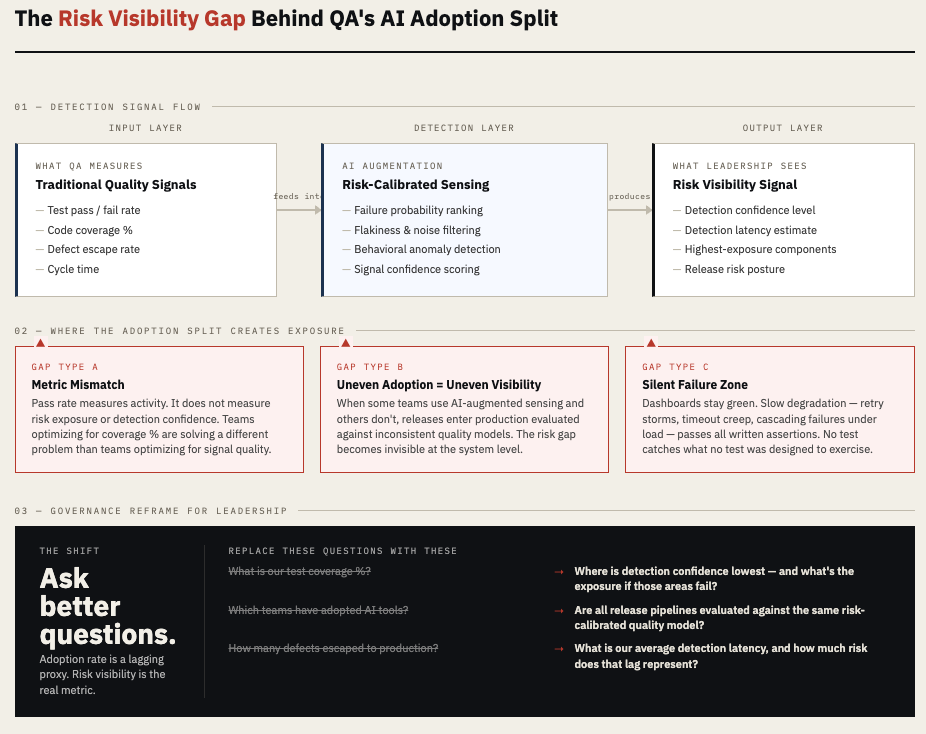

Pass rate is not a risk metric. It’s an activity metric. It tells you how many tests ran and what proportion completed without a failure assertion. It does not tell you how much of the system’s actual failure surface was exercised, how confident you should be in those results, or where exposure sits if a subset of those tests were fragile to begin with.
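To make the gap between an activity metric and a risk metric concrete, here is a minimal sketch that computes both from the same run. It assumes each test can be mapped to the components it exercises, that flaky tests are already flagged, and that each component carries a risk weight; the `RunResult` type, the weights, and the test names are all hypothetical illustrations, not a standard model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunResult:
    name: str
    passed: bool
    flaky: bool                 # hypothetical flag, e.g. derived from rerun history
    components: frozenset[str]  # components this test actually exercises


def pass_rate(results: list[RunResult]) -> float:
    """Activity metric: the share of executed tests that passed."""
    return sum(r.passed for r in results) / len(results)


def risk_exercised(results: list[RunResult], risk: dict[str, float]) -> float:
    """Share of total risk weight touched by at least one non-flaky test.

    Flaky tests are excluded because their outcome says little about the
    components they touch.
    """
    covered: set[str] = set()
    for r in results:
        if not r.flaky:
            covered |= r.components
    total = sum(risk.values())
    return sum(w for component, w in risk.items() if component in covered) / total


# Hypothetical risk weights: checkout and payments dominate the failure surface.
risk = {"catalog": 1.0, "search": 1.0, "checkout": 6.0, "payments": 2.0}
results = [
    RunResult("catalog_list", True, False, frozenset({"catalog"})),
    RunResult("catalog_detail", True, False, frozenset({"catalog"})),
    RunResult("search_basic", True, False, frozenset({"search"})),
    RunResult("search_filters", False, False, frozenset({"search"})),
    RunResult("checkout_happy_path", True, True, frozenset({"checkout", "payments"})),
]

print(f"pass rate:      {pass_rate(results):.0%}")            # 80%
print(f"risk exercised: {risk_exercised(results, risk):.0%}")  # 20%
```

The same suite reads as 80% green, yet only a fifth of the weighted failure surface is exercised by a trustworthy test, because the only checkout test is flaky.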

When teams don’t agree on what signal they’re trying to improve, they can’t agree on whether AI improves it. One engineer evaluates AI by whether it generates more tests faster. Another evaluates it by whether tests catch meaningful regressions. A senior QE lead evaluates it by whether the team’s overall detection capability has improved. These are three different problems. All three people are talking about “AI in QA” as if it’s one debate.

The adoption split, then, is a symptom. The underlying condition is that the team is operating without a risk-calibrated quality model. And that condition doesn’t go away if you mandate adoption or ban the tools. It follows the team.

What Common Metrics Miss

The standard QA metrics dashboard — coverage percentage, pass/fail rate, defect escape rate, cycle time — was designed for a world of deterministic test execution. You run known tests. You measure known outcomes. You compare to a threshold.

Those metrics were never designed to surface where the system is fragile, where test confidence is low, or where signal-to-noise ratios in the test suite have degraded. They don’t distinguish between a test that passed because the system is working correctly and a test that passed because it didn’t actually exercise the risky path.

Google’s Site Reliability Engineering book frames testing as a way to reduce uncertainty about a system’s reliability as it changes, which is a question of confidence, not just coverage. Yet most quality dashboards don’t operationalize confidence at all. The number tells you what ran. It doesn’t tell you what it means.

AI-assisted testing changes this equation, but only if the team knows what problem they’re solving. If you add AI test generation to a suite that already has low signal-to-noise, you get more tests with the same problem. If you apply AI anomaly detection without defining what counts as a meaningful anomaly in your context, you get alerts without action.
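Defining what counts as a meaningful anomaly can be as simple as writing the rule down. The sketch below assumes a stream of per-window latency samples and uses a plain statistical rule (a z-score against a fixed baseline, sustained over several consecutive windows) rather than any particular AI product; the thresholds and the sample data are hypothetical and would need calibration in a real system.

```python
from statistics import mean, stdev
from typing import Optional


def sustained_drift(
    samples: list[float],
    baseline_size: int = 20,
    z_threshold: float = 3.0,
    min_consecutive: int = 5,
) -> Optional[int]:
    """Return the index at which a sustained upward drift is confirmed, else None.

    The definition of a meaningful anomaly is explicit: at least
    `min_consecutive` consecutive samples more than `z_threshold` standard
    deviations above the mean of the first `baseline_size` samples.
    An isolated spike is ignored.
    """
    if baseline_size < 2 or len(samples) <= baseline_size:
        return None
    baseline = samples[:baseline_size]
    mu = mean(baseline)
    sigma = max(stdev(baseline), 1e-9)  # guard against a perfectly flat baseline
    run = 0
    for i in range(baseline_size, len(samples)):
        z = (samples[i] - mu) / sigma
        run = run + 1 if z > z_threshold else 0
        if run >= min_consecutive:
            return i
    return None


# Hypothetical p95 checkout latencies in ms: a stable baseline, one isolated
# spike, then a slow, sustained degradation.
latencies = [100.0, 110.0] * 10 + [200.0, 105.0] + [150.0] * 5
print(sustained_drift(latencies))  # 26
```

The consecutive-window requirement is the design choice that turns noise into a decision: the lone 200 ms spike never raises an alert, while the sustained shift does, which is the kind of slow degradation that passes every assertion.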

The teams succeeding with AI augmentation aren’t the ones who adopted the most tools. They’re the ones who got precise about what failure patterns they couldn’t see before — and used AI to close those specific gaps.

The AI Augmentation Layer: What It Actually Changes

AI doesn’t replace QA judgment. It changes where judgment gets applied.

In a conventional test process, most QE effort goes into writing and maintaining tests. Prioritization is informal, often driven by which components feel risky given recent history. Detection depends on whether a test was written to cover the scenario that actually failed.

AI augmentation shifts effort allocation. Classification models can rank which tests, test paths, or components carry the highest failure probability given recent change patterns and execution history. Anomaly detection can surface behavioral deviations that don’t trigger any written assertion — the kind of slow degradation that passes all tests and still breaks production. Risk reweighting can continuously adjust which areas of the system deserve more test attention as the system evolves.
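As a rough illustration of ranking tests by failure probability, the sketch below uses a hand-weighted logistic score over two assumed features: a test’s recent failure rate and how much of the code it covers was touched by the current change. It stands in for a trained classification model; the `HistoryRecord` type, the weights, and the file names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class HistoryRecord:
    name: str
    recent_failure_rate: float     # failures / runs over a recent window
    covered_files: frozenset[str]  # files the test is known to exercise


# Hypothetical, hand-picked weights. A real system would fit these from
# labelled execution history, for example with logistic regression.
BIAS, W_FAILURE, W_CHANGE = -3.0, 4.0, 3.0


def failure_probability(record: HistoryRecord, changed: set[str]) -> float:
    """Logistic score combining failure history with overlap on changed files."""
    overlap = len(record.covered_files & changed) / max(len(record.covered_files), 1)
    logit = BIAS + W_FAILURE * record.recent_failure_rate + W_CHANGE * overlap
    return 1.0 / (1.0 + math.exp(-logit))


def prioritize(records: list[HistoryRecord], changed: set[str]) -> list[HistoryRecord]:
    """Order tests so the most likely failures run (and get reviewed) first."""
    return sorted(records, key=lambda r: failure_probability(r, changed), reverse=True)


history = [
    HistoryRecord("test_catalog", 0.01, frozenset({"catalog.py"})),
    HistoryRecord("test_checkout_timeout", 0.10, frozenset({"checkout.py", "retry.py"})),
    HistoryRecord("test_search", 0.30, frozenset({"search.py"})),
]
for r in prioritize(history, changed={"retry.py"}):
    print(f"{r.name:24} {failure_probability(r, {'retry.py'}):.2f}")
```

With `retry.py` changed, the checkout timeout test ranks first even though the search test fails more often historically. Rerunning the ranking on every change, with failure rates refreshed from new runs, is one simple form of the continuous risk reweighting described above.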

None of this is automatic. Each mechanism requires calibration and organizational trust. But the directional change matters: AI augmentation moves QA from static coverage to dynamic risk sensing. The question shifts from “did we run enough tests?” to “are we sensing risk accurately?”

That is a fundamentally different job definition — and it explains some of the adoption friction. Engineers trained on coverage-centric models aren’t wrong about what they know. They’re being asked to value a different output without being given a framework for why the new output is more meaningful.

Enterprise Translation: Uneven Adoption, Uneven Exposure

Leadership often sees the QA adoption split as a people problem: some teams are ahead, others are behind, and the goal is to close the gap.

That framing misses the operational consequence.

When different parts of a QA organization are operating on different quality models — some using AI-augmented risk sensing, others using traditional coverage metrics — the organization’s overall risk visibility becomes inconsistent. A feature developed in one team’s release cycle may be evaluated against a high-signal, risk-calibrated model. A feature from another team enters production evaluated against pass rate alone.

From an engineering risk standpoint, that inconsistency is an exposure gap. You don’t know which releases carry undetected risk because the detection model wasn’t applied uniformly. And in interconnected systems — which most modern software platforms are — a fragile component released from one team’s pipeline can cascade into failures across components held to a higher detection standard.

The revenue exposure from this gap isn’t hypothetical. The Consortium for IT Software Quality (CISQ) estimated the cost of poor software quality in the United States at over $2.4 trillion annually, with significant portions attributable to operational failures and undetected defects reaching production. Not all of that is a QA failure, but detection gaps are a meaningful contributor. [Source listed below.]

What Leaders Should Govern

The instinct for leadership facing this split is to pick a side: either push adoption faster or slow down and standardize. Both miss the real governance question.

The governance question is not “how many teams are using AI tools?” It’s “how consistently can this organization detect and quantify risk before release?”

That requires leaders to ask their QA and quality engineering leaders different questions:

Not “what is our coverage percentage?” but “where is our detection confidence lowest, and why?”

Not “which teams have adopted AI?” but “which parts of the system are we least able to see clearly before release, and what’s the business exposure if those areas fail?”

A meaningful governance posture treats QA capability as a risk sensing function, not a compliance checkbox. It funds tooling, training, and calibration based on where risk exposure is highest — not based on which teams were earliest adopters.

It also means tracking a new class of leading indicators: test signal quality, detection latency (the gap between when a defect enters the system and when it’s detected), and confidence calibration across the suite. These indicators don’t fit neatly on today’s QA dashboards. That’s precisely why they matter.
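Two of these indicators are straightforward to compute once the underlying events are recorded. The sketch below assumes you can pair each defect’s introduction time (for example, its commit) with its first detection time, and that your prioritization produces per-test failure probabilities. It uses the median for detection latency, so one long-tail escape doesn’t dominate, and the Brier score as one common calibration measure; the function names and sample data are hypothetical.

```python
from datetime import datetime
from statistics import median


def detection_latency_hours(events: list[tuple[datetime, datetime]]) -> float:
    """Median gap, in hours, between when a defect entered and when it was detected."""
    gaps = [(detected - introduced).total_seconds() / 3600 for introduced, detected in events]
    return median(gaps)


def brier_score(predicted: list[float], failed: list[bool]) -> float:
    """Calibration check for predicted failure probabilities.

    Mean squared error between each prediction and the actual outcome
    (1.0 = failed, 0.0 = passed). Lower is better; always predicting 0.5
    scores 0.25.
    """
    if len(predicted) != len(failed) or not predicted:
        raise ValueError("need equal-length, non-empty inputs")
    return sum((p - float(f)) ** 2 for p, f in zip(predicted, failed)) / len(predicted)


# Hypothetical (introduced, detected) timestamps, e.g. commit time vs. first failing signal.
events = [
    (datetime(2024, 5, 1, 9, 0), datetime(2024, 5, 1, 11, 0)),  # 2 h
    (datetime(2024, 5, 2, 9, 0), datetime(2024, 5, 2, 13, 0)),  # 4 h
    (datetime(2024, 5, 3, 9, 0), datetime(2024, 5, 4, 9, 0)),   # 24 h
]
print(detection_latency_hours(events))  # 4.0
print(round(brier_score([0.9, 0.2, 0.1], [True, False, True]), 3))  # 0.287
```

The Brier score here is pulled up mostly by the third prediction: a test rated at 10% failure probability that actually failed is exactly the kind of miscalibration a confidence-aware dashboard should surface.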

The Takeaway

The QA adoption split isn’t a morale problem or a skills gap — though both may be present. It’s a signal that most quality engineering teams are operating without a shared, risk-calibrated definition of what “better” means.

AI augmentation offers real capability improvements in detection, prioritization, and risk sensing. But those improvements only materialize when the organization knows what problem it’s measuring and governs toward that measurement consistently.

Leadership that waits for adoption consensus will keep asking the wrong questions. Leadership that reframes the question — from adoption rate to risk visibility quality — will start seeing what their dashboards currently don’t.

Sources / Further Reading

- CISQ — The Cost of Poor Software Quality in the U.S. (2022 Report): https://www.it-cisq.org/

- Google SRE Book — Testing for Reliability: https://sre.google/sre-book/testing-reliability/

- IEEE Standard 829 — Software and System Test Documentation: https://standards.ieee.org/

- DORA Research — State of DevOps Report: https://dora.dev/research/