The dashboards looked clean.

Ninety-four percent test pass rate. Eighty-one percent code coverage. Build pipelines green across every environment. The engineering org had spent months hardening its test suite, and the numbers reflected that effort. Leadership reviewed the metrics in quarterly planning and felt good about the trajectory.

Then traffic spiked—a scheduled promotion, a regional surge, three concurrent batch jobs—and within forty minutes the system began degrading in ways nobody had anticipated. Not a catastrophic crash. Something slower and more damaging: partial failures, cascading timeouts, inconsistent data states that customers encountered before monitoring caught them.

The coverage metrics hadn’t lied. They had simply measured something other than what broke.

What Pass Rate Actually Measures

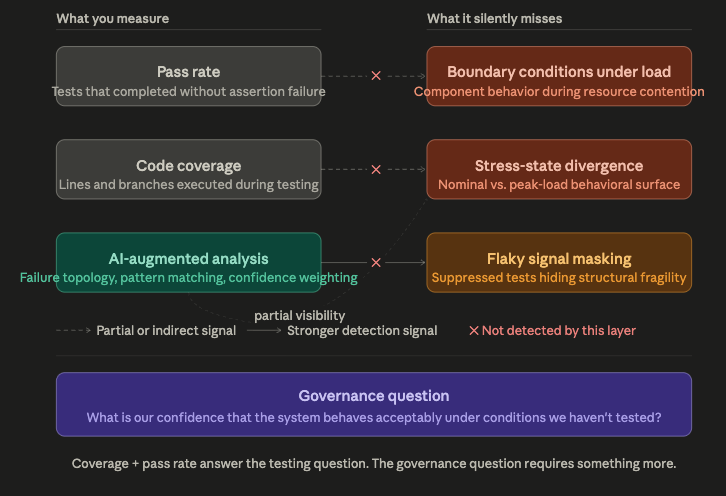

A pass rate measures the proportion of test cases that completed without assertion failure under the conditions in which they were run.

That last clause is doing most of the work.

“Under the conditions in which they were run” is almost always a controlled, low-contention environment. Single-threaded execution. Isolated dependencies. Predictable latency. Clean data state. The tests are designed to run reliably, which is a reasonable engineering goal—except when that reliability is achieved by removing the conditions that cause real failures.

Code coverage, similarly, measures whether a line or branch was executed during testing. It says nothing about whether that execution was meaningful, adversarial, or representative of production behavior. A test that touches a circuit breaker path without ever triggering it counts as coverage. A test that calls a retry handler with a mock that always succeeds counts as coverage. The metric lights green, and the exposure remains.
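The retry-handler case can be made concrete with a minimal sketch. The function `call_with_retry` and the test names below are hypothetical, written only for illustration. The first test exercises the handler with a dependency that never fails, so the function shows up as exercised in a coverage report even though the retry branch never runs. The other two inject failures, so the retry logic is actually tested.

```python
from unittest.mock import Mock


def call_with_retry(operation, attempts=3):
    """Call operation, retrying on ConnectionError up to `attempts` times."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error = None
    for _ in range(attempts):
        try:
            return operation()
        except ConnectionError as exc:
            last_error = exc  # remember the failure and try again
    raise last_error


def test_always_succeeds():
    # The mock never fails, so the except branch is never entered.
    # A coverage report still shows call_with_retry as exercised.
    op = Mock(return_value="ok")
    assert call_with_retry(op) == "ok"
    assert op.call_count == 1


def test_recovers_after_failures():
    # side_effect raises twice, then returns: the retry path really runs.
    op = Mock(side_effect=[ConnectionError("down"), ConnectionError("down"), "ok"])
    assert call_with_retry(op) == "ok"
    assert op.call_count == 3


def test_gives_up_when_budget_exhausted():
    # Every call fails, so the handler must stop after `attempts` tries.
    op = Mock(side_effect=ConnectionError("down"))
    try:
        call_with_retry(op, attempts=2)
    except ConnectionError:
        pass
    else:
        raise AssertionError("expected ConnectionError")
    assert op.call_count == 2
```

All three tests pass, and all three count toward the same pass rate. Only the last two say anything about how the handler behaves when the dependency misbehaves.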

This is not an argument against coverage metrics. They are useful signals for finding completely untested code. The problem is the inference gap—the leap from “we have 80% coverage” to “we understand 80% of our risk.” Those two statements are not equivalent, and the distance between them is where silent failures live.

Three Blind Spots Coverage Can’t See

1. Boundary condition behavior under resource contention

Most tests validate logic. They verify that function A returns value B given input C. What they rarely test is what function A does when the database connection pool is at capacity, the memory allocator is under pressure, and three other service calls are competing for the same timeout budget.

This is the behavioral gap between unit correctness and system resilience. A component can pass every test in isolation and fail predictably under production load—not because the logic is wrong, but because the interaction surface with the rest of the system is untested. Coverage doesn’t see this. Pass rates don’t track it. It is invisible until the system is under stress.
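As a rough illustration of that gap, the sketch below uses a hypothetical `BoundedPool` class as a stand-in for a real connection pool. The pool size, hold time, and timeout are arbitrary assumptions. The same `lookup` call succeeds when run alone and times out for most callers once more of them arrive than the pool has slots.

```python
import threading
import time

HOLD_SECONDS = 0.3  # assumed time a query holds its connection


class BoundedPool:
    """Hypothetical connection pool: at most `size` concurrent holders."""

    def __init__(self, size):
        self._slots = threading.BoundedSemaphore(size)

    def run(self, work, timeout):
        # Wait up to `timeout` seconds for a free slot, as a caller with
        # a request deadline would.
        if not self._slots.acquire(timeout=timeout):
            raise TimeoutError("pool exhausted")
        try:
            return work()
        finally:
            self._slots.release()


def lookup():
    time.sleep(HOLD_SECONDS)  # stands in for a query holding a connection
    return "row"


def run_concurrently(pool, callers, timeout):
    """Start `callers` threads at once; return (successes, timeouts)."""
    results = []
    lock = threading.Lock()

    def worker():
        try:
            outcome = pool.run(lookup, timeout)
        except TimeoutError:
            outcome = "timeout"
        with lock:
            results.append(outcome)

    threads = [threading.Thread(target=worker) for _ in range(callers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results.count("row"), results.count("timeout")


# One caller: the isolated, unit-test view of the same code.
print(run_concurrently(BoundedPool(size=2), callers=1, timeout=0.05))
# Six callers competing for two slots with a short deadline.
print(run_concurrently(BoundedPool(size=2), callers=6, timeout=0.05))
```

The logic in `lookup` is identical in both runs. What differs is contention for the shared resource, which a test that runs one caller at a time never creates.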

2. Stress-state divergence

Systems exhibit different behaviors at rest and under load. Connection pools degrade gracefully—until they don’t, and then they fail sharply. Cache hit rates hold steady—until request diversity spikes and they collapse. Queue workers process consistently—until back-pressure builds and they start dropping acknowledgments.

These divergences follow patterns. They are not random. But they don’t appear in unit or integration tests running against synthetic, low-load environments. The system has what might be called a stress state: a regime of behavior it enters under real conditions that is essentially unmapped by conventional testing. High coverage in nominal-state testing tells you nothing about the stress-state surface.
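The cache example lends itself to a small simulation. The sketch below assumes a least-recently-used eviction policy and a deliberately adversarial cyclic request stream. Both are illustrative choices, not a model of any particular production cache. It replays two streams that differ by a single distinct key and reports the hit rate for each.

```python
from collections import OrderedDict


def lru_hit_rate(requests, capacity):
    """Replay `requests` through an LRU cache and return the hit fraction."""
    cache = OrderedDict()
    hits = 0
    for key in requests:
        if key in cache:
            hits += 1
            cache.move_to_end(key)  # mark as most recently used
        else:
            cache[key] = True
            if len(cache) > capacity:
                cache.popitem(last=False)  # evict least recently used
    return hits / len(requests)


def cyclic_stream(distinct_keys, length):
    """Deterministic stream that cycles through `distinct_keys` keys."""
    return [i % distinct_keys for i in range(length)]


CAPACITY = 100
# Working set fits the cache: only the first pass over the keys misses.
print(lru_hit_rate(cyclic_stream(100, 10_000), CAPACITY))
# One extra distinct key: each request evicts the key needed next.
print(lru_hit_rate(cyclic_stream(101, 10_000), CAPACITY))
```

With the working set at capacity, nearly every request hits. With one more distinct key in the cycle, every request misses. Real traffic is rarely this adversarial, but the shape is the point: the transition is a cliff rather than a slope, and a test that stays inside the comfortable regime never finds the edge.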

3. Flaky signal noise masking structural fragility

Flaky tests, which sometimes pass and sometimes fail without any change to the code, are commonly treated as an annoyance to be suppressed. Teams quarantine them, retry them automatically, or skip them. The pass rate stays high. The dashboard stays green.

But flakiness is often a signal, not just noise. A test that intermittently fails under concurrency may be detecting a real race condition that only surfaces when timing lines up a particular way. A test that fails sporadically against a shared environment may be revealing state pollution between tests—a sign that components are not as isolated as assumed. Suppressing flaky tests doesn’t remove the underlying exposure; it removes the visibility.
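One way to keep that visibility is to report on flaky tests before quarantining them. The sketch below assumes a run history of `(test_name, commit, passed)` records and a hand-maintained mapping from tests to components. The record shape, the test names, and the component labels are all hypothetical. It treats a test as flaky when it both passed and failed on the same commit, then counts flaky tests per component.

```python
from collections import defaultdict


def flaky_tests(runs):
    """Return tests that both passed and failed on the same commit.

    `runs` is an iterable of (test_name, commit, passed) tuples.
    """
    outcomes = defaultdict(set)
    for test_name, commit, passed in runs:
        outcomes[(test_name, commit)].add(passed)
    flaky = {name for (name, _), seen in outcomes.items() if seen == {True, False}}
    return sorted(flaky)


def flaky_by_component(flaky, component_of):
    """Count flaky tests per component to show where they concentrate."""
    counts = defaultdict(int)
    for name in flaky:
        counts[component_of[name]] += 1
    return dict(counts)


# Hypothetical history: two tests flip on an unchanged commit.
runs = [
    ("test_reserve_stock", "c1", True),
    ("test_reserve_stock", "c1", False),
    ("test_release_stock", "c1", False),
    ("test_release_stock", "c1", True),
    ("test_render_invoice", "c1", True),
    ("test_render_invoice", "c2", False),  # different commit: not flaky
]
component_of = {
    "test_reserve_stock": "inventory-db",
    "test_release_stock": "inventory-db",
    "test_render_invoice": "billing",
}
print(flaky_by_component(flaky_tests(runs), component_of))
```

If the flaky tests concentrate on one component, as they do in this made-up history, that is a reason to look for a shared race or leaked state there before reaching for the quarantine list.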

What AI Adds to the Detection Picture

Pattern recognition on failure topology changes what’s detectable—not by replacing tests, but by analyzing what the test record actually reveals.

Where traditional metrics count pass/fail outcomes, AI-augmented analysis can identify where failures cluster in the dependency graph, how failure rates shift under different environmental conditions, and which combinations of test states correlate with downstream production incidents.

The mechanism here is classification and anomaly detection applied to the structural shape of test failure data. A model trained on historical failure patterns can flag when the topology of today’s failures resembles pre-incident patterns, even if the raw pass rate looks normal. It can distinguish between systemic fragility (failures that cluster around a particular service boundary or data path) and random noise (failures distributed uniformly across unrelated tests).
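The distinction between clustered and uniformly spread failures can be approximated without any trained model. The sketch below swaps in a plain heuristic, a per-component "lift" (a component's share of failures divided by its share of tests), as a simple stand-in for the classification and anomaly detection described above. The component names and the mapping are hypothetical.

```python
from collections import Counter


def failure_lift(test_components, failed_tests):
    """Failure share divided by test share, per component.

    test_components: dict mapping test name -> component name
    failed_tests: names of the tests that failed in this run
    A lift near 1.0 means failures are spread in proportion to test
    count; a lift well above 1.0 means they concentrate in a component.
    """
    total_by_component = Counter(test_components.values())
    failed_by_component = Counter(test_components[name] for name in failed_tests)
    total_tests = len(test_components)
    total_failed = len(failed_tests)
    lift = {}
    for component, count in total_by_component.items():
        test_share = count / total_tests
        if total_failed:
            failure_share = failed_by_component[component] / total_failed
        else:
            failure_share = 0.0
        lift[component] = failure_share / test_share
    return lift


# Hypothetical suite: 10 tests in each of three components.
components = {}
for name in ("payments", "search", "profile"):
    for i in range(10):
        components[f"{name}_{i}"] = name

clustered = ["payments_0", "payments_1", "payments_2"]
scattered = ["payments_0", "search_0", "profile_0"]
print(failure_lift(components, clustered))
print(failure_lift(components, scattered))
```

Both runs have the same pass rate, 27 of 30. In the first, every failure sits on one service boundary. In the second, the failures are spread evenly. A raw percentage cannot tell those two runs apart.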

AI also contributes meaningfully to confidence scoring. Rather than treating “81% coverage” as a single flat signal, AI can weight that number by asking: coverage of what? Coverage of the high-traffic paths? Of the error-handling branches? Of the concurrency surface? Coverage of code that has never failed means something different from coverage of code that has failed under stress. Reweighting the coverage signal by failure history and criticality produces a risk-adjusted confidence estimate rather than a raw percentage.
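The arithmetic behind a risk-adjusted figure can be sketched in a few lines. The weighting used here, traffic multiplied by one plus the past incident count, is an illustrative assumption, as are the path names and numbers. A real system would have to calibrate its weights against its own incident data.

```python
def raw_coverage(paths):
    """Line-weighted coverage across paths, as a dashboard would report it."""
    covered = sum(p["coverage"] * p["lines"] for p in paths)
    return covered / sum(p["lines"] for p in paths)


def risk_adjusted_coverage(paths):
    """Weighted mean of per-path coverage.

    Each path is a dict with:
      coverage  - fraction of the path executed by tests
      lines     - size of the path in lines of code
      traffic   - relative share of production requests
      incidents - past production failures attributed to the path
    The weight traffic * (1 + incidents) is an assumption made for
    illustration, not an established formula.
    """
    weights = [p["traffic"] * (1 + p["incidents"]) for p in paths]
    weighted = sum(w * p["coverage"] for w, p in zip(weights, paths))
    return weighted / sum(weights)


# Hypothetical codebase: a large, quiet module and a small, busy one.
paths = [
    {"name": "admin_reports", "coverage": 0.95, "lines": 800, "traffic": 1, "incidents": 0},
    {"name": "checkout", "coverage": 0.40, "lines": 200, "traffic": 9, "incidents": 2},
]
print(round(raw_coverage(paths), 2))
print(round(risk_adjusted_coverage(paths), 2))
```

In this made-up example the raw figure is about 0.84, while the risk-adjusted figure is about 0.42, because most of the covered lines sit in code that carries little traffic and has no failure history.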

This is not a claim that AI catches everything coverage misses. It doesn’t. The stress-state gap, in particular, still requires investment in realistic load testing and chaos-style fault injection. AI augments detection; it doesn’t substitute for architectural rigor.

What This Means at the Enterprise Level

When a leadership team looks at “94% pass rate” in a quarterly review, they are not seeing risk. They are seeing a count of controlled experiments that passed under favorable conditions.

The gap between that number and actual system resilience is a form of invisible liability. It doesn’t appear on any dashboard. It doesn’t surface in any sprint report. It accumulates silently until a peak-load event, a competitive traffic spike, or a third-party dependency failure exposes it.

The enterprise cost of this gap isn’t just downtime. It is customer trust eroded during the period of degraded experience, revenue lost during transaction failures, incident response cost, and—increasingly in regulated sectors—compliance exposure when systems handling sensitive transactions behave unexpectedly under stress.

Organizations that govern by coverage metrics alone are, in effect, governing by the absence of evidence. They know what their tests checked. They do not know what their tests missed.

Governing Exposure, Not Just Outcomes

The metric shift this requires is not complex, but it is cultural.

Coverage and pass rate remain useful as baseline hygiene signals. They need to be supplemented with questions that probe the boundary between tested behavior and real system behavior:

What proportion of our high-traffic paths have been tested under realistic concurrency? This question probes the stress-state gap directly. The answer is usually lower than teams expect.

What is our flaky test policy, and are we treating flakiness as signal? Quarantining flaky tests without root-cause investigation removes a detection layer that was functioning, imperfectly, as a risk signal.

How are we measuring coverage of error paths, not just happy paths? Error handling, retry logic, fallback behavior—these are the paths that matter most under stress, and they are disproportionately absent from coverage-focused test suites.

For investment decisions, the framing that matters is not “how much testing do we have” but “what is our confidence that the system will behave acceptably under conditions we haven’t tested?” That is a risk question, not a coverage question. And it is the question that determines whether green dashboards represent genuine resilience—or just well-managed appearances.

Engineering teams can produce good coverage numbers without meaningfully reducing exposure. Leadership that governs only by those numbers will find out the difference when it costs the most to learn it.
