A large software platform enters a high-value demand window. Traffic climbs fast. Checkout volume rises. Shared services run hotter than usual. Test suites passed earlier in the day. Deployment telemetry looked normal. Error rates are still within tolerance.

Then, under sustained load, three services degrade in sequence.

Not because one obvious defect slipped through. Not because testing never happened. The failure emerges because the system was carrying exposure that standard signals did not make visible. The checks were green. The risk was not.

That pattern is common enough to deserve better language.

Modern engineering teams are surrounded by metrics: test pass rates, code coverage, deployment frequency, error counts, and incident trends. These signals matter. But most of them answer narrower questions than teams and leaders want them to answer. They tell you what executed, what passed, what shipped, or what has already gone wrong. They do not reliably tell you how much hidden engineering risk the system is carrying right now.

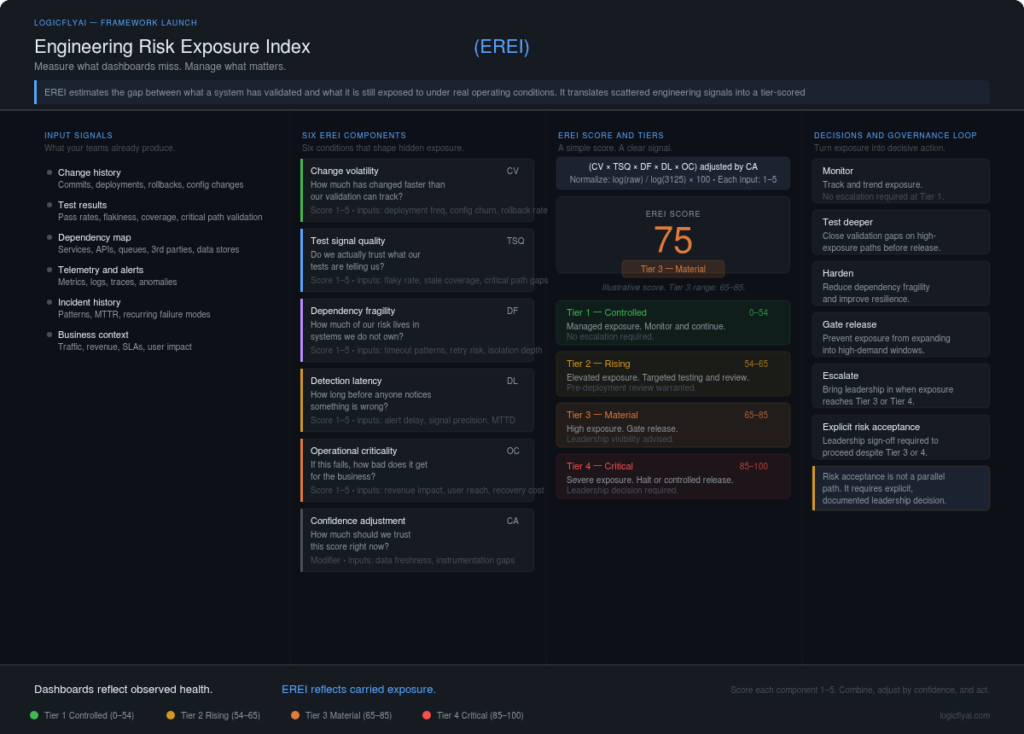

That is the problem the Engineering Risk Exposure Index (EREI) is designed to address.

EREI is a structured framework for estimating the gap between what a system has validated and what it is still exposed to under real operating conditions. In plain terms: it helps teams measure hidden exposure before it becomes visible failure.

It is not a replacement for testing, observability, incident review, or human judgment. It is a risk-sensing layer that helps engineers, quality teams, and leaders reason about exposure before an outage, escalation, or trust event forces the conversation.

Why Current Metrics Create a False Sense of Control

The issue is not lack of data. The issue is category error.

A high test pass rate tells you that selected checks produced expected results under known conditions. It does not tell you whether those checks represented the highest-risk paths, realistic concurrency, or stress-sensitive failure modes.

A code coverage number tells you which lines were executed during testing. It does not tell you whether the right conditions were exercised, whether resilience paths were tested, or whether downstream fragility was represented at all.

A deployment frequency metric says something useful about flow and delivery behavior. It says very little, by itself, about the exposure profile of what just changed.

A low defect count can mean good engineering. It can also mean weak discovery. The metric cannot tell you which.

An incident count measures realized failure. It does not measure accumulated exposure.

These metrics are not wrong. They are partial. Trouble starts when partial signals are treated as complete truth.

That is how teams end up in a structurally dangerous position: the operating story sounds healthy while the actual resilience posture is weakening. Dashboards look acceptable because visible thresholds have not been crossed. Meanwhile, the system may be carrying weak validation signals, long detection delays, high change volatility, tight dependency coupling, and serious business consequence — none of which appear in a pass-rate summary.

EREI exists to make that gap discussable, scoreable, and governable.

What EREI Measures

EREI does not ask “Did anything fail yet?” It asks a harder and more useful question:

Given the nature of the system, its recent change pattern, the quality of its validation signals, its dependency posture, its detection speed, and its business criticality — how much meaningful exposure are we carrying right now?

That is a different operating model. Instead of only asking whether a release passed, teams can ask whether the release is entering production with controlled exposure, rising exposure, material exposure, or critical exposure. Instead of only tracking defects found, leaders can ask whether important systems are being run with weak evidence and slow detection.

That shift matters because many expensive failures begin as unmeasured exposure — not as visible incident symptoms.

The Six EREI Components

Each component is defined below. Practitioners will want the full definitions. Leaders can follow the worked example and formula walkthrough that follow, and return to the definitions as needed.

1. Change Volatility (CV)

Measures how unstable the recent change pattern is relative to the system’s validated baseline.

Signals include deployment frequency, size and concentration of recent changes in high-traffic paths, configuration churn, schema modifications, rollback frequency, and cross-service coupling introduced by recent work.

The logic is direct: the more volatile the recent change pattern, the greater the chance that exposure has shifted faster than validation has caught up. A system that looked stable two weeks ago may carry a very different risk posture after a configuration change, a schema adjustment, and a new dependency path — even if the test suite still passes.

Plain English: How much has changed faster than our validation can track?

2. Test Signal Quality (TSQ)

Measures how trustworthy current validation signals actually are: not how many tests ran, but whether the tests that ran provide genuine assurance about the paths that matter most.

Not all passing tests provide equal confidence. A suite with weak coverage of real risk paths, high flaky test rates, or a large layer of stale tests produces motion without clarity. The tests are green; the confidence is not earned.

Signals include pass/fail distributions, flaky test rates, stale coverage in recently changed areas, critical-path representation, and mismatch between where production failures historically occur and where test effort is concentrated.

Plain English: Do we actually trust what our tests are telling us?
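One of the Test Signal Quality signals listed above, flaky test rate, can be estimated from run history. The sketch below assumes a common working definition (a test is flaky if it both passed and failed on the same commit) and a hypothetical record shape of `(test_name, commit_sha, passed)`; neither is prescribed by EREI.

```python
from collections import defaultdict


def flaky_tests(runs):
    """Return the names of tests that both passed and failed on one commit.

    `runs` is an iterable of (test_name, commit_sha, passed) tuples.
    This record shape is an assumption made for illustration.
    """
    outcomes = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)
    # Two distinct outcomes on the same code means the result is not stable.
    return {name for (name, _sha), seen in outcomes.items() if len(seen) == 2}


def flaky_rate(runs):
    """Fraction of distinct tests flagged as flaky (0.0 if there are no runs)."""
    runs = list(runs)
    all_tests = {name for name, _sha, _passed in runs}
    if not all_tests:
        return 0.0
    return len(flaky_tests(runs)) / len(all_tests)


# Hypothetical run history.
runs = [
    ("test_checkout_total", "abc123", True),
    ("test_checkout_total", "abc123", False),  # same commit, different outcome
    ("test_refund_path", "abc123", True),
    ("test_refund_path", "def456", True),
    ("test_login", "abc123", False),           # failed, then passed on a new commit
    ("test_login", "def456", True),
    ("test_cart_merge", "def456", True),
]

print(sorted(flaky_tests(runs)))
print(flaky_rate(runs))
```

A test that fails on one commit and passes on the next is not counted here, because the code changed between runs. How a flaky rate translates into a 1–5 TSQ score is a calibration decision left to each team.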

3. Dependency Fragility (DF)

Measures how much risk the system imports through its connected services, APIs, data stores, and external platforms.

Modern systems rarely fail in isolation. Exposure moves through third-party services, internal platforms, queues, shared databases, identity systems, and network boundaries. A service can appear perfectly stable in isolation while remaining highly exposed through a fragile upstream dependency it does not control.

Signals include timeout patterns, retry amplification risk, historical instability of specific dependencies, failover behavior, and the degree of isolation between coupled components.

Plain English: How much of our risk lives in systems we do not own?
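Retry amplification risk, one of the Dependency Fragility signals above, is easy to underestimate because it compounds across hops. As a minimal sketch, assuming each service in a call chain retries a failing downstream call a fixed number of times, the worst-case number of attempts reaching the deepest dependency is the product of the per-hop attempt counts. The chain and retry counts are hypothetical.

```python
from math import prod


def worst_case_amplification(retries_per_hop):
    """Worst-case attempts hitting the deepest dependency per user request.

    Each hop makes 1 original attempt plus its retries, and every attempt
    at one hop can trigger a full set of attempts at the next hop.
    """
    if any(r < 0 for r in retries_per_hop):
        raise ValueError("retry counts must be non-negative")
    return prod(1 + r for r in retries_per_hop)


# Hypothetical chain: gateway -> checkout -> payment provider client,
# each configured with 2 retries.
print(worst_case_amplification([2, 2, 2]))
```

Three layers with two retries each can turn one user request into 27 calls against a dependency that is already struggling, which is why retry policy belongs in a fragility assessment rather than only in client configuration.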

4. Detection Latency (DL)

Measures how quickly meaningful degradation would actually be recognized by existing monitoring and alerting infrastructure.

Many teams collect substantial telemetry and still detect important failure patterns late. Detection Latency is not just about whether monitoring exists — it is about whether the monitoring is useful. An alert that fires after a queue has been backing up for twenty minutes is not early detection. It is confirmation that damage is already in progress.

Signals include alert delay, weak symptom-to-cause visibility, poor log correlation, low signal precision, and the real-world time between anomaly onset and confident team recognition.

Plain English: How long before anyone notices something is wrong?
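The last signal above, the time between anomaly onset and confident team recognition, can be measured directly from past incidents. The following sketch assumes incident records reduced to two timestamps each; the dates and the record shape are hypothetical.

```python
from datetime import datetime
from statistics import median

# Hypothetical incident records: (anomaly onset, confident team recognition).
incidents = [
    (datetime(2024, 3, 1, 10, 0), datetime(2024, 3, 1, 10, 20)),
    (datetime(2024, 3, 9, 14, 5), datetime(2024, 3, 9, 14, 11)),
    (datetime(2024, 3, 17, 2, 30), datetime(2024, 3, 17, 3, 11)),
]


def detection_latency_minutes(onset, recognized):
    """Minutes between when degradation began and when the team trusted it."""
    if recognized < onset:
        raise ValueError("recognition cannot precede onset")
    return (recognized - onset).total_seconds() / 60


latencies = [detection_latency_minutes(o, r) for o, r in incidents]
median_latency = median(latencies)
worst_latency = max(latencies)

print(f"median: {median_latency:.0f} min, worst: {worst_latency:.0f} min")
```

Reporting the worst case alongside the median is a deliberate choice here: a typical latency can look acceptable while the slowest detections are the ones that shape real exposure.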

5. Operational Criticality (OC)

Measures the business consequence of failure for this specific system in its current context.

Not every technical weakness deserves the same response. A low-volume internal reporting tool and a customer-facing payment flow should not carry the same decision weight. Operational Criticality translates technical exposure into enterprise consequence — customer reach, revenue sensitivity, trust sensitivity, recovery difficulty, and any regulatory or contractual consequence that would shape leadership response.

Plain English: If this fails, how bad does it get for the business?

6. Confidence Adjustment (CA)

Measures how much trust to place in the EREI score itself.

This is one of the most important components in the model, and one of the most often ignored in engineering risk discussions. A score derived from stale data, weak instrumentation, or conflicting signals should carry less decision weight than a score built from clean, recent, consistent inputs.

Low confidence does not mean low risk. In practice, it often signals the opposite — an organization flying with weak sightlines where those visibility gaps are themselves part of the exposure.

Signals include data freshness, instrumentation gaps, unresolved conflicts across indicators, and known blind spots in observability or service ownership.

Plain English: How much should we trust this score right now?

Scoring Logic and Tier Outputs

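EREI uses a lightweight scoring model designed to be readable by engineers and leaders alike. Each of the five multiplied components (CV, TSQ, DF, DL, OC) is scored 1–5 by level of concern, so a higher number always means more exposure; a Test Signal Quality score of 5, for example, means a weak test signal, not a strong one. Confidence Adjustment is rated High, Medium, or Low rather than scored numerically.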

- 1 = low concern

- 3 = moderate concern

- 5 = high concern

The core formula:

EREI Score = (CV × TSQ × DF × DL × OC) adjusted by CA

Confidence Adjustment operates as a review modifier rather than a direct multiplier:

- High confidence: use the score as calculated

- Medium confidence: widen review scope; treat borderline tiers more cautiously

- Low confidence: raise escalation sensitivity — weak visibility is itself material exposure

The exact arithmetic can vary by organization. The value of EREI is not false precision. It is a shared structure that makes hidden exposure easier to compare, explain, and govern.
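As a minimal sketch of the scoring logic above, the five concern scores can be validated and multiplied, with Confidence Adjustment carried alongside as review guidance rather than folded into the arithmetic. The class and field names are illustrative assumptions, not part of a published EREI implementation.

```python
from dataclasses import astuple, dataclass, fields
from enum import Enum
from math import prod


class Confidence(Enum):
    """Confidence Adjustment as a review modifier, not a multiplier."""
    HIGH = "use the score as calculated"
    MEDIUM = "widen review scope; treat borderline tiers more cautiously"
    LOW = "raise escalation sensitivity; weak visibility is itself material exposure"


@dataclass(frozen=True)
class Components:
    cv: int   # Change Volatility
    tsq: int  # Test Signal Quality (5 = weakest signal)
    df: int   # Dependency Fragility
    dl: int   # Detection Latency
    oc: int   # Operational Criticality

    def __post_init__(self):
        # Every component is a concern score from 1 (low) to 5 (high).
        for field, value in zip(fields(self), astuple(self)):
            if not isinstance(value, int) or not 1 <= value <= 5:
                raise ValueError(
                    f"{field.name} must be an integer from 1 to 5, got {value!r}"
                )

    def raw_score(self) -> int:
        """Product of the five concern scores (ranges from 1 to 3125)."""
        return prod(astuple(self))


# A system with moderate concern on every component.
moderate = Components(cv=3, tsq=3, df=3, dl=3, oc=3)
print(moderate.raw_score())
print(Confidence.MEDIUM.value)
```

Because the components multiply, a single high score raises the result sharply, while a single 1 cannot zero it out the way a 0 would.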

EREI maps to four exposure tiers:

| EREI Tier | Meaning | Default Decision Trigger |
|---|---|---|
| Tier 1: Controlled | Managed exposure | Monitor; no escalation required |
| Tier 2: Rising | Elevated exposure | Targeted testing; pre-deployment review |
| Tier 3: Material | High exposure | Gated deployment; executive visibility advised |
| Tier 4: Critical | Severe exposure | Halt or controlled release; leadership decision required |

Thresholds should be calibrated to the system and the organization’s risk posture. A trust-sensitive financial workflow should not share the same practical thresholds as a low-consequence internal reporting tool.
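One way to express per-system calibration is a small table of tier cutoffs keyed by risk profile. The sketch below assumes a score on a 100-point scale; the profile names and every cutoff value are hypothetical placeholders, since EREI leaves thresholds to each organization.

```python
from bisect import bisect_right

TIERS = (
    "Tier 1: Controlled",
    "Tier 2: Rising",
    "Tier 3: Material",
    "Tier 4: Critical",
)

# Hypothetical lower bounds for Tiers 2, 3, and 4 under each profile.
# A trust-sensitive workflow escalates at lower scores than an internal tool.
PROFILES = {
    "payment_flow": (60, 75, 90),
    "internal_reporting": (70, 85, 95),
}


def tier_for(score, profile):
    """Map a score to a tier using the cutoffs for the given profile."""
    cutoffs = PROFILES[profile]
    # bisect_right counts how many cutoffs the score has reached or passed.
    return TIERS[bisect_right(cutoffs, score)]


print(tier_for(78, "payment_flow"))
print(tier_for(78, "internal_reporting"))
```

The same score of 78 lands in Tier 3 for the payment profile and Tier 2 for the internal reporting profile, which is the practical meaning of calibrating thresholds to consequence.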

A Worked Example: Scoring a Payment Service Before a Peak-Load Event

Consider a customer-facing transaction service preparing for a high-demand release window.

Recent work introduced configuration changes, modified a schema path, and added a new third-party dependency call. Tests are mostly green, but critical-path concurrency scenarios are thin. One external dependency has shown intermittent latency in recent weeks. Monitoring exists, but earlier incidents required manual review before teams trusted the signal. The service handles payment confirmation.

| Component | Score | Rationale |
|---|---|---|
| Change Volatility | 4 | Config changes, schema modification, and a new dependency path, all in the last sprint |
| Test Signal Quality | 4 | High pass rate but documented flakiness; peak-load concurrency scenarios absent |
| Dependency Fragility | 3 | One unstable dependency with latency history; partial isolation in place |
| Detection Latency | 4 | Alerts present but require manual validation before teams act |
| Operational Criticality | 5 | Customer-facing payment confirmation; direct revenue and trust consequence |
| Confidence Adjustment | Medium | Telemetry gaps remain; parts of the test suite are stale in recently changed areas |

Raw score: 4 × 4 × 3 × 4 × 5 = 960

Because the raw product of five 1–5 inputs can range from 1 to 3,125, EREI normalizes this onto a 0–100 governance scale using a simple log normalization (a raw score of 1 maps to 0, and 3,125 maps to 100):

Normalized score = (log(raw) / log(3125)) × 100

Applying that: (log(960) / log(3125)) × 100 ≈ 85 out of 100
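The normalization is short enough to check by hand or in a few lines of code. This sketch implements the formula as written; the function name and the range check are illustrative additions.

```python
import math

MAX_RAW = 5 ** 5  # 3125, the product of five maximum scores


def normalize(raw):
    """Log-normalize a raw EREI product onto a 0-100 scale.

    The log base cancels out in the ratio, so natural log is used here.
    """
    if not 1 <= raw <= MAX_RAW:
        raise ValueError(f"raw score must be between 1 and {MAX_RAW}, got {raw}")
    return math.log(raw) / math.log(MAX_RAW) * 100


payment_service = normalize(4 * 4 * 3 * 4 * 5)  # the worked example, raw = 960
all_moderate = normalize(3 ** 5)                # every component scored 3

print(round(payment_service, 1))
print(round(all_moderate, 1))
```

One consequence worth knowing before setting thresholds: the log scale compresses the upper range, so a system scored 3 on every component lands at about 68, not 50. Tier cutoffs should be chosen with that shape in mind.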

With medium Confidence Adjustment, the operational interpretation is: treat this as the upper end of Tier 3, and evaluate it for Tier 4 before the release window opens.

Dashboards reflect observed health. EREI reflects carried exposure. Those are not the same condition, and treating them as though they are is how preventable failures slip through.

How the Formula Works in Practice: A Three-System Comparison

The formula’s real power becomes visible when you score multiple systems side by side and use the results to drive a prioritization decision. Here is a realistic cross-system comparison across three services ahead of the same release cycle.

System A: Payment confirmation service (the example above)

CV=4, TSQ=4, DF=3, DL=4, OC=5 → Raw: 960 → Normalized: ~85 → CA: Medium → Operational tier: Tier 3, escalate toward Tier 4

System B: Internal analytics dashboard

CV=2, TSQ=3, DF=2, DL=3, OC=2 → Raw: 72 → Normalized: ~53 → CA: High → Operational tier: Tier 1, monitor

System C: Checkout session management service

CV=5, TSQ=4, DF=4, DL=5, OC=5 → Raw: 2000 → Normalized: ~94 → CA: Low → Operational tier: Tier 4, halt or gate

[Figure: the three systems plotted across the tier scale]

What the comparison tells a decision-maker:

System B scores 53 with high Confidence Adjustment. The evidence is clean and the exposure is moderate. This system can proceed normally. No deployment gate required.

System A scores 85 with medium Confidence Adjustment. It sits at the boundary of Tier 3 and Tier 4. The medium confidence means the team does not have full visibility, which is itself a risk signal. The recommended call: do not deploy this service into the peak-load window without executive awareness and targeted validation on the dependency and concurrency gaps first.

System C scores 94 with low Confidence Adjustment. This is the most important finding in the comparison. A score of 94 would already warrant a halt or controlled release. Low confidence makes it worse: the team is making decisions about a highly critical, heavily changed, poorly monitored checkout service with incomplete evidence. Low CA on a high score is not a reason to feel better about the number. It is a reason to treat the real exposure as potentially higher than the model can currently see. This system should not enter the high-demand window in its current state.

The business conclusion from this three-system scan is concrete: one system is clear to deploy, one requires conditional gating and targeted testing, and one requires a leadership decision before the release window opens. That is a governance output — not just an engineering status report.
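The three-system scan can be reproduced end to end in a short script. This is a sketch under stated assumptions: the tier cutoffs and the confidence review margins are hypothetical values chosen to illustrate the mechanism, and treating lower confidence as a wider review band is one possible reading of "treat borderline tiers more cautiously," not a rule defined by EREI.

```python
import math
from bisect import bisect_right
from math import prod

TIER_CUTOFFS = (60, 75, 90)  # hypothetical lower bounds for Tiers 2, 3, 4
REVIEW_MARGIN = {"high": 0, "medium": 5, "low": 10}  # hypothetical, in points

# (CV, TSQ, DF, DL, OC) and Confidence Adjustment, as scored in the comparison.
SYSTEMS = {
    "A: payment confirmation": ((4, 4, 3, 4, 5), "medium"),
    "B: internal analytics dashboard": ((2, 3, 2, 3, 2), "high"),
    "C: checkout session management": ((5, 4, 4, 5, 5), "low"),
}


def assess(scores, confidence):
    """Return (raw, normalized, tier, review_tier) for one system."""
    raw = prod(scores)
    normalized = math.log(raw) / math.log(5 ** len(scores)) * 100
    tier = 1 + bisect_right(TIER_CUTOFFS, normalized)
    # Lower confidence widens the band in which the next tier is also reviewed.
    padded = normalized + REVIEW_MARGIN[confidence]
    review_tier = 1 + bisect_right(TIER_CUTOFFS, padded)
    return raw, normalized, tier, review_tier


# Highest raw exposure first, so the riskiest system leads the review.
ranked = sorted(SYSTEMS.items(), key=lambda item: prod(item[1][0]), reverse=True)
for name, (scores, confidence) in ranked:
    raw, normalized, tier, review_tier = assess(scores, confidence)
    note = f", evaluate for Tier {review_tier}" if review_tier > tier else ""
    print(f"System {name}: raw={raw}, normalized={normalized:.0f}, "
          f"CA={confidence}, Tier {tier}{note}")
```

Under these assumed cutoffs the script reaches the same operational calls as the comparison: System C is Tier 4, System A is Tier 3 with a Tier 4 evaluation, and System B is Tier 1.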

This is the difference between outcome governance and exposure governance. With exposure governance, the work happens before the event: three systems are scored, one is stopped, and one is conditionally hardened. With outcome governance alone, no scoring happens, and the conversation begins after the event with an incident report.

[Figure: the EREI framework, showing the full six-component model and governance loop]

Where AI Changes the Signal

EREI can be used manually, and many teams should start there. But the model is well suited to AI augmentation — and the honest case for it is narrow and practical.

Engineering teams generate far more signal than they can manually interpret in real time. Pre-release test execution produces thousands of results. Monitoring pipelines emit continuous telemetry. Dependency health varies across dozens of connected systems. The bottleneck is not a shortage of signal. It is signal interpretation, reweighting, and routing. That is where AI earns its place.

On Change Volatility: AI can identify change patterns that look routine at the ticket or commit level but are riskier in aggregate — repeated modifications across tightly coupled components, or quiet concentration of changes in historically fragile paths.

On Test Signal Quality: AI can classify flaky patterns, identify stale or redundant tests, and compare changed code paths with the actual shape of test attention — helping teams see where test motion is masking weak assurance.

On Dependency Fragility: Graph-based analysis can map dependency relationships and weight them by change frequency, failure history, and coupling density, surfacing imported risk that local teams may underestimate.

On Detection Latency: AI can recognize precursor patterns in telemetry before fixed alert thresholds are crossed, shortening the time between degradation and confident recognition.

On Operational Criticality: AI can help connect technical systems with business workflows, user impact patterns, and downstream consequences so that exposure is prioritized more intelligently.

On Confidence Adjustment: AI can compare evidence quality across signals and flag when the organization is making judgments from stale, conflicting, or incomplete inputs — especially when dashboards look clean but the evidence base is thin.

Across all six areas, AI works best as a risk reweighting and confidence support layer. It helps teams decide where to look first, which green signals deserve less trust, and where exposure is changing faster than the dashboard suggests.

Tradeoffs and Limits

A framework is only credible if it states its limits clearly.

EREI can mislead if treated as objective truth. It is structured judgment applied to available evidence — not a measurement of ground truth. Teams that treat a Tier 1 score as a guarantee, or a Tier 4 score as a reason to panic without investigation, are misusing the framework.

Used poorly, EREI becomes another status artifact — a number generated periodically, reviewed superficially, and filed away. Used well, it becomes a disciplined way to surface where confidence is thinner than teams assume. That distinction is a governance choice, not a technical one.

Input quality determines output quality. EREI can be skewed by poor data, inconsistent scoring across teams, weak service inventories, or organizational pressure to keep exposure scores artificially low. The framework is only as honest as the people operating it.

EREI does not replace deep engineering work. It does not substitute for architecture review, testing strategy, observability design, or incident analysis. It is a risk-sensing layer, not a replacement for the practices that address risk at the source.

EREI is a point-in-time estimate. Exposure changes with every deployment, infrastructure shift, and traffic change. A quarterly score with no operational follow-through quickly becomes decorative.

Some failures will still surprise you. The aim is not certainty. It is better exposure visibility — reducing the size of the gap between what organizations believe their risk posture is and what it actually is.

What EREI Changes for Engineers

For engineers, EREI shifts the conversation from activity to exposure.

It forces a different set of questions: Are we actually reducing risk, or just increasing testing motion? Which tests matter most to exposure, not just coverage volume? Which dependency paths deserve hardening work first? Where are we making deployment decisions with weak confidence sightlines?

One of the quieter advantages of this framework is that it gives engineering teams permission to be selective for the right reasons. Not every failing test represents equal exposure. Not every dependency warrants equal hardening investment. Not every service carries the same consequence weight. EREI provides a structured basis for those prioritization decisions — one that engineering leadership can defend upward and that engineers can work from without second-guessing.

The test suite that matters most is not the largest one. It is the one with the highest signal quality on the highest-exposure paths.

What EREI Changes for Leaders

For leaders, EREI translates engineering conditions into decision language.

A defect count is a lagging indicator. An incident report is even later. Both tell you what has already happened. EREI offers a way to discuss trust, revenue sensitivity, operational posture, and investment need before the damage is visible.

A Tier 2 system may justify targeted hardening before a high-value release. A Tier 3 system warrants leadership visibility and a deployment gate conversation. A Tier 4 system — particularly one with low Confidence Adjustment — is no longer purely a technical question. It is a question about trust, revenue sensitivity, and organizational risk acceptance.

When Tier 3 or Tier 4 systems are deployed into high-demand windows without executive visibility, that is not an engineering failure. It is a governance failure. The exposure existed. The language to surface it did not.

Adoption Path: Start Small, Govern Early

EREI is designed to be adopted incrementally.

Phase 1 — Manual baseline. Choose one critical workflow or service. Score all six components using existing knowledge. The disagreements that surface are often as valuable as the score itself — they reveal where teams hold different assumptions about exposure.

Phase 2 — Integrate existing signals. Connect EREI inputs to what teams already collect: test runs, deployment history, dependency health, incident patterns, observability data.

Phase 3 — Add AI where signal quality is weakest. Test Signal Quality, Detection Latency, and Dependency Fragility are often strong starting points because teams frequently struggle with noise, delay, and imported risk in these areas.

Phase 4 — Governance integration. At this stage, EREI becomes more than a team exercise. It informs release review, resilience planning, hardening priorities, leadership visibility, and investment decisions.

The adoption path is: Start small → integrate into routine decisions → govern exposure explicitly.

Governing Exposure, Not Just Outcomes

Most organizations govern outcomes. They review incidents, outages, missed commitments, major defects, and customer escalations. Those processes matter. But they are structurally late. By the time outcome review begins, the exposure that created the event has often been present for days, weeks, or longer.

EREI supports a different governance posture:

Not just: Did anything break? But: What exposure are we carrying right now, and is it consistent with our risk posture?

Not just: Was the deployment successful? But: Did we knowingly release with Tier 3 or Tier 4 exposure, and who understood that decision?

Dashboards reflect observed health. EREI reflects carried exposure. Those are not the same condition — and the distance between them is where most preventable failures live.

Because the most dangerous engineering risk is often not the defect you can already see.

It is the exposure you have not yet learned to measure.

Sources / Further Reading

- DORA State of DevOps Reports — https://dora.dev/research/

- ISO/IEC 25010: Systems and Software Quality Models — https://www.iso.org/standard/35733.html

- NIST SP 800-30: Guide for Conducting Risk Assessments — https://csrc.nist.gov/publications/detail/sp/800-30/rev-1/final

- Google SRE Book, Chapter 3: Embracing Risk — https://sre.google/sre-book/embracing-risk/

- Accelerate: The Science of Lean Software and DevOps (Forsgren, Humble, Kim) — via standard book channels